Review — Image Style Transfer Using Convolutional Neural Networks

Image Style Transfer Using VGGNet, Combine Content and Style

Image Style Transfer Using Convolutional Neural Networks

by University of Tübingen, Bernstein Center for Computational Neuroscience, Max Planck Institute for Biological Cybernetics, and Baylor College of Medicine

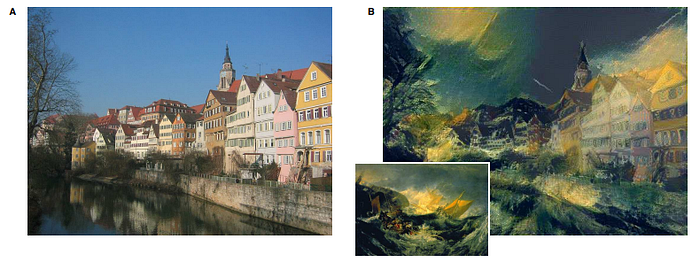

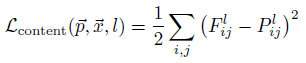

- A Neural Algorithm of Artistic Style is introduced that can separate and recombine the image content and style of natural images.

- The algorithm allows us to produce new images of high perceptual quality that combine the content of an arbitrary photograph with the appearance of numerous well-known artworks.

This is a 2016 CVPR paper with over 3400 citations. (Sik-Ho Tsang @ Medium)

Outline

- Image Style Transfer

- Style Transfer Algorithm

- Experimental Results

1. Image Style Transfer

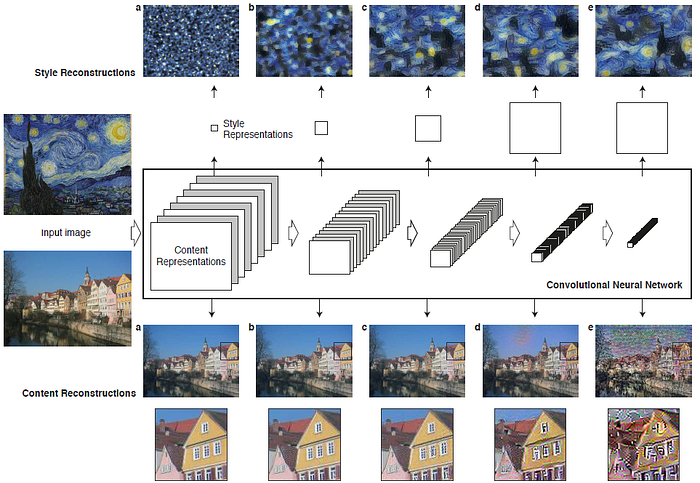

- A given input image is represented as a set of filtered images at each processing stage in the CNN. While the number of different filters increases along the processing hierarchy, the size of the filtered images is reduced by some downsampling mechanism (e.g. max-pooling), which decreases the total number of units per layer of the network.
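To make the trade-off between filter count and spatial size concrete, here is a minimal sketch that tabulates the units per convolutional stage. It assumes the VGG filter counts of 64, 128, 256, 512 and 512 per stage, size-preserving convolutions, 2×2 pooling between stages, and a 224×224 input; the input size and the helper name `stage_shapes` are illustrative assumptions, not taken from the review.

```python
# Filter counts of the five VGG conv stages; the 224x224 input is an assumption.
VGG_STAGE_CHANNELS = [64, 128, 256, 512, 512]

def stage_shapes(height=224, width=224):
    """Return (stage, channels, height, width, units) for each conv stage.

    Convolutions are assumed to preserve spatial size; every stage after
    the first is preceded by a 2x2 pooling step that halves each dimension.
    """
    rows = []
    for stage, channels in enumerate(VGG_STAGE_CHANNELS, start=1):
        if stage > 1:
            height, width = height // 2, width // 2
        rows.append((stage, channels, height, width, channels * height * width))
    return rows

for stage, c, h, w, units in stage_shapes():
    print(f"conv{stage}_x: {c:3d} filters of {h}x{w} -> {units:,} units")
```

Under these assumptions the filter count doubles while the area of each filtered image quarters, so the number of units roughly halves from one stage to the next.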

1.1. Content Reconstructions

- The input image is reconstructed from layers ‘conv1_2’ (a), ‘conv2_2’ (b), ‘conv3_2’ (c), ‘conv4_2’ (d) and ‘conv5_2’ (e) of the original VGG-Network.

- It is found that reconstruction from lower layers is almost perfect (a–c). In higher layers of the network, detailed pixel information is lost while the high-level content of the image is preserved (d,e).

1.2. Style Reconstructions

- On top of the original CNN activations, a feature space is used to capture the texture information of an input image.

- The style representation computes correlations between the different features in different layers of the CNN.

- The style of the input image is reconstructed from a style representation built on different subsets of CNN layers: ‘conv1_1’ (a); ‘conv1_1’ and ‘conv2_1’ (b); ‘conv1_1’, ‘conv2_1’ and ‘conv3_1’ (c); ‘conv1_1’, ‘conv2_1’, ‘conv3_1’ and ‘conv4_1’ (d); ‘conv1_1’, ‘conv2_1’, ‘conv3_1’, ‘conv4_1’ and ‘conv5_1’ (e).

- This creates images that match the style of a given image on an increasing scale while discarding information of the global arrangement of the scene.
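The feature correlations used for the style representation are the entries of a Gram matrix (see Section 2.2). The sketch below illustrates why such a representation discards the global arrangement: shuffling the spatial positions of a feature map leaves its Gram matrix unchanged. It assumes a layer's features are stored as a (filters × positions) array; the toy sizes and the name `gram_matrix` are illustrative assumptions.

```python
import numpy as np

def gram_matrix(features):
    """Inner products between all pairs of vectorised feature maps.

    `features` has shape (num_filters, num_positions); the result has
    shape (num_filters, num_filters).
    """
    return features @ features.T

rng = np.random.default_rng(0)
F = rng.standard_normal((4, 9))      # toy layer: 4 filters, 3x3 = 9 positions
shuffled = F[:, rng.permutation(9)]  # same responses, scrambled positions

# The sum over positions does not depend on their order.
print(np.allclose(gram_matrix(F), gram_matrix(shuffled)))  # prints True
```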

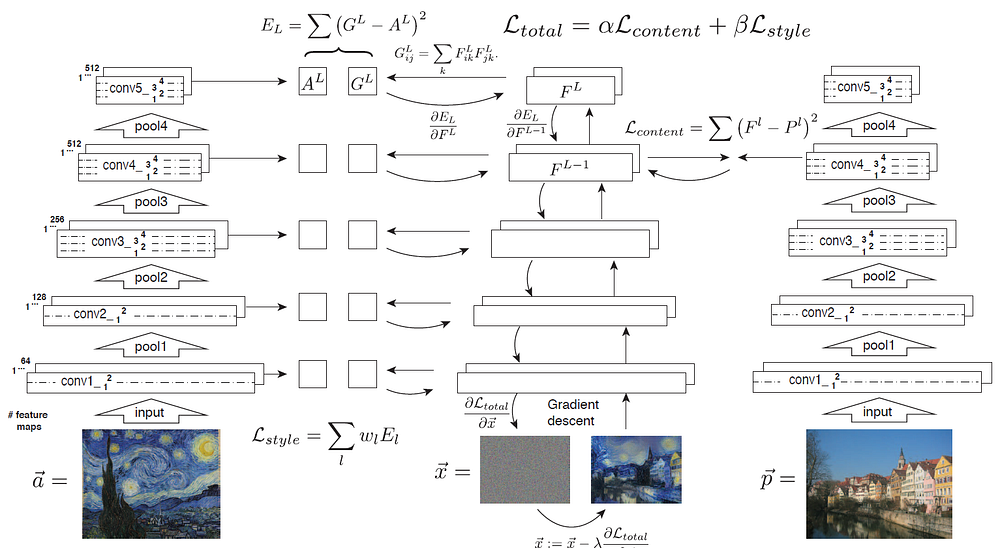

2. Style Transfer Algorithm

2.1. Inputs

- First, content and style features are extracted and stored.

- The style image $\vec{a}$ is passed through the network, and its style representation $A^l$ on every included layer is computed and stored (left).

- The content image $\vec{p}$ is passed through the network, and the content representation $P^l$ in one layer is stored (right).

- Then a random white noise image $\vec{x}$ is passed through the network, and its style features $G^l$ and content features $F^l$ are computed.

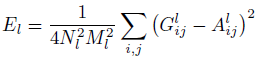

2.2. Style Loss

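- On each layer included in the style representation, the element-wise mean squared difference between $G^l$ and $A^l$ is computed to give that layer's contribution $E_l$ to the style loss $L_{style}$ (left):

$$E_l = \frac{1}{4 N_l^2 M_l^2} \sum_{i,j} \left( G^l_{ij} - A^l_{ij} \right)^2$$

- where $N_l$ is the number of feature maps in layer $l$, $M_l$ is the size (height times width) of each feature map, and $G^l_{ij}$ ($G^l$: Gram matrix) is the inner product between the vectorised feature maps $i$ and $j$ in layer $l$:

$$G^l_{ij} = \sum_k F^l_{ik} F^l_{jk}$$

- And the total style loss is the weighted sum over the layers, with weighting factors $w_l$:

$$L_{style}(\vec{a}, \vec{x}) = \sum_{l=0}^{L} w_l E_l$$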
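The style loss described above can be sketched in NumPy as follows. This is a minimal sketch, assuming each layer's features are stored as an (N_l × M_l) array and that each layer's squared Gram-matrix difference is normalised by 1/(4 N_l² M_l²) as in the paper; the function names, the random toy features and the equal layer weights are illustrative assumptions.

```python
import numpy as np

def gram_matrix(features):
    """Gram matrix of a (num_filters, num_positions) feature array."""
    return features @ features.T

def layer_style_loss(F, A):
    """Contribution of one layer: generated features F versus target Gram matrix A."""
    n, m = F.shape  # n filters, m spatial positions
    G = gram_matrix(F)
    return np.sum((G - A) ** 2) / (4.0 * n**2 * m**2)

def style_loss(features, targets, weights):
    """Weighted sum of the per-layer contributions."""
    return sum(w * layer_style_loss(F, A)
               for F, A, w in zip(features, targets, weights))

rng = np.random.default_rng(0)
# Two toy "layers" with different filter counts and spatial sizes.
style_feats = [rng.standard_normal((4, 16)), rng.standard_normal((8, 4))]
generated_feats = [rng.standard_normal((4, 16)), rng.standard_normal((8, 4))]
targets = [gram_matrix(F) for F in style_feats]  # computed once and stored
weights = [0.5, 0.5]                             # equal weights (assumption)

print(style_loss(style_feats, targets, weights))      # zero: identical correlations
print(style_loss(generated_feats, targets, weights))  # positive: correlations differ
```

Note that the targets are Gram matrices rather than raw features, so only the correlations of the style image need to be kept.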

2.3. Content Loss

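- Also, the squared-error difference between $F^l$ and $P^l$ is computed to give the content loss $L_{content}$ (right):

$$L_{content}(\vec{p}, \vec{x}, l) = \frac{1}{2} \sum_{i,j} \left( F^l_{ij} - P^l_{ij} \right)^2$$

- where $F^l_{ij}$ is the activation of the $i$th filter at position $j$ in layer $l$.

- The total loss $L_{total}$ is then a linear combination of the content and the style loss, with weighting factors $\alpha$ and $\beta$:

$$L_{total}(\vec{p}, \vec{a}, \vec{x}) = \alpha L_{content}(\vec{p}, \vec{x}) + \beta L_{style}(\vec{a}, \vec{x})$$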
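As a small worked example of the content loss and the linear combination, the sketch below uses hand-picked 2×2 feature arrays so the arithmetic can be checked by eye. It assumes the content loss is half the sum of squared differences, as in the paper; the function names and the particular values of the weights are illustrative assumptions, not the settings used in the paper.

```python
import numpy as np

def content_loss(F, P):
    """Half the sum of squared differences between feature arrays F and P."""
    return 0.5 * np.sum((F - P) ** 2)

def total_loss(l_content, l_style, alpha, beta):
    """Linear combination of content and style loss."""
    return alpha * l_content + beta * l_style

F = np.array([[1.0, 2.0], [3.0, 4.0]])  # features of the generated image
P = np.array([[1.0, 0.0], [3.0, 1.0]])  # stored features of the content image

l_content = content_loss(F, P)  # 0.5 * (2**2 + 3**2) = 6.5
print(l_content)                # prints 6.5
print(total_loss(l_content, l_style=0.5, alpha=1.0, beta=4.0))  # prints 8.5
```

Raising `beta` relative to `alpha` puts more emphasis on matching the style, while lowering it favours the content.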

2.4. Output

- The derivative of the total loss $L_{total}$ with respect to the pixel values can be computed using error back-propagation (middle).

- This gradient is used to iteratively update the image $\vec{x}$ until it simultaneously matches the style features of the style image $\vec{a}$ and the content features of the content image $\vec{p}$ (middle, bottom).
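The update loop can be illustrated on a toy problem. The sketch below is not the VGG pipeline: it assumes a single linear "layer" (a fixed random 1×1 convolution `K`) so that the gradient with respect to the pixels can be written by hand, and it uses plain gradient descent with a fixed step size, whereas the paper reports using L-BFGS. All names, sizes, weights and the step size are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
K = 0.5 * rng.standard_normal((4, 3))       # toy layer: 4 filters over 3 channels
content_img = rng.standard_normal((3, 16))  # 3 channels, 4x4 = 16 pixels
style_img = rng.standard_normal((3, 16))

def features(img):
    """Toy feature extractor: one linear 1x1 convolution (stand-in for a CNN)."""
    return K @ img

def gram(F):
    return F @ F.T

P = features(content_img)      # stored content features
A = gram(features(style_img))  # stored style representation
ALPHA, BETA = 1.0, 10.0        # loss weights (assumed values)

def loss_and_grad(x):
    """Total loss and its gradient with respect to the pixels of x."""
    F = features(x)
    n, m = F.shape
    D = gram(F) - A
    l_content = 0.5 * np.sum((F - P) ** 2)
    l_style = np.sum(D ** 2) / (4.0 * n**2 * m**2)
    # Gradient with respect to the features, then back through the layer.
    dF = ALPHA * (F - P) + BETA * (D @ F) / (n**2 * m**2)
    return ALPHA * l_content + BETA * l_style, K.T @ dF

x = rng.standard_normal((3, 16))  # start from white noise
losses = []
for _ in range(200):
    loss, grad = loss_and_grad(x)
    losses.append(loss)
    x -= 0.05 * grad              # fixed-step gradient descent (assumption)

print(f"loss: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

In a real implementation the hand-written gradient would be replaced by automatic differentiation through the network, with the network weights kept fixed and only the image updated.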

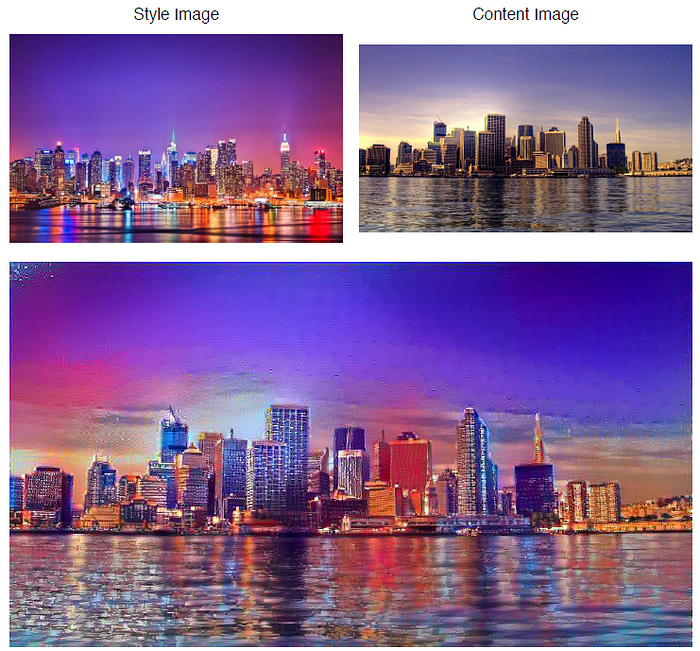

3. Experimental Results

- The style is transferred from a photograph showing New York by night onto a picture showing London by day.

- The images presented in this paper were synthesized at a resolution of about 512×512 pixels, and the synthesis procedure could take up to an hour on an Nvidia K40 GPU.

Reference

[2016 CVPR] [Image Style Transfer]

Image Style Transfer Using Convolutional Neural Networks