Brief Review — IBA-U-Net: Attentive BConvLSTM U-Net with Redesigned Inception for medical image segmentation

IBA-U-Net Uses Dense Blocks and LSTM with Attention in U-Net

IBA-U-Net: Attentive BConvLSTM U-Net with Redesigned Inception for medical image segmentation

IBA-U-Net, by Nanchang University and Carleton University, 2021 Computers in Biology and Medicine, Over 10 Citations (Sik-Ho Tsang @ Medium)


- The encoder-decoder Attentive BConvLSTM U-Net with Redesigned Inception (IBA-U-Net) is proposed.

- The BConvLSTM block and the Attention block are integrated to reduce the semantic gap between the encoder and decoder feature maps, making the two feature maps more homogeneous.

- Convolutions with large filter sizes are factorized in the Redesigned Inception block, which uses multiscale feature fusion to significantly increase the effective receptive field.
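To make the factorization idea concrete, the arithmetic sketch below (a generic illustration, not taken from the paper) compares one large k×k convolution with a stack of stride-1 3×3 convolutions covering the same theoretical receptive field. It assumes C input and C output channels at every layer and ignores biases; the function names are hypothetical.

```python
def stacked_3x3_receptive_field(n_layers: int) -> int:
    """Theoretical receptive field (side length) of n stacked stride-1 3x3 convs."""
    # Each additional 3x3 layer widens the receptive field by 2 pixels.
    return 1 + 2 * n_layers


def conv_weights(kernel: int, c_in: int, c_out: int) -> int:
    """Weight count of a single kernel x kernel convolution (bias ignored)."""
    return kernel * kernel * c_in * c_out


def compare(n_layers: int, channels: int) -> tuple[int, int, int]:
    """Return (receptive field, weights of one big conv, weights of the 3x3 stack)."""
    rf = stacked_3x3_receptive_field(n_layers)
    single = conv_weights(rf, channels, channels)
    stacked = n_layers * conv_weights(3, channels, channels)
    return rf, single, stacked


for n in (1, 2, 3):
    rf, single, stacked = compare(n, channels=64)
    print(f"{rf}x{rf}: one conv {single:,} weights vs {n} stacked 3x3 {stacked:,}")
```

For C = 64, a 5×5 kernel needs 102,400 weights versus 73,728 for two 3×3 layers, and a 7×7 kernel needs 200,704 versus 110,592 for three, while the stacks also insert extra nonlinearities between layers.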

Outline

- Attentive BConvLSTM U-Net with Redesigned Inception (IBA-U-Net)

- Results

1. Attentive BConvLSTM U-Net with Redesigned Inception (IBA-U-Net)

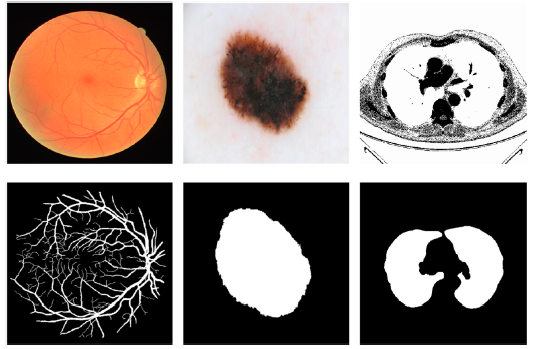

- Encoder-decoder architectures for medical image segmentation, such as U-Net, combine local information from high-resolution feature maps with low-resolution global features to distinguish foreground from background, as shown above.

- A Redesigned Inception (RI) block is proposed to enhance features throughout the network.

- (Familiarity with the Inception block, dense block, LSTM, and attention is assumed below.)
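A rough shape trace can show where the two kinds of information meet: the encoder halves the resolution and widens the channels at each level, and each decoder level concatenates the upsampled coarse features with the same-resolution encoder features carried by the skip connection. The sketch below assumes a plain U-Net with "same" padding, 2×2 pooling, up-convolutions that halve the channels, a 256×256 input, a base width of 64, and 4 downsamplings; these numbers are illustrative, not IBA-U-Net's configuration, and `unet_shape_trace` is a hypothetical helper.

```python
def unet_shape_trace(size: int = 256, base: int = 64, depth: int = 4) -> list[str]:
    """Trace (H, W, C) through a plain U-Net with 'same' padding (illustrative only)."""
    log = []
    skips = []  # (resolution, channels) saved for each skip connection
    channels = base
    for level in range(depth):
        log.append(f"enc{level}: {size}x{size}x{channels}")
        skips.append((size, channels))
        size //= 2       # 2x2 max pooling halves the resolution
        channels *= 2    # the next level doubles the width
    log.append(f"bottleneck: {size}x{size}x{channels}")
    for level in reversed(range(depth)):
        skip_size, skip_ch = skips[level]
        size *= 2        # upsample back to the skip resolution
        channels //= 2   # up-convolution halves the channels
        assert size == skip_size
        # Concatenate coarse (global) decoder features with fine (local) encoder features.
        concat = channels + skip_ch
        log.append(f"dec{level}: concat -> {size}x{size}x{concat}, conv -> {skip_ch}")
        channels = skip_ch
    return log


for line in unet_shape_trace():
    print(line)
```

In IBA-U-Net, per this review, the plain two-convolution stage is replaced by the RI block and the plain skip connection by the Attentive BConvLSTM block; the bookkeeping of resolutions stays the same.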

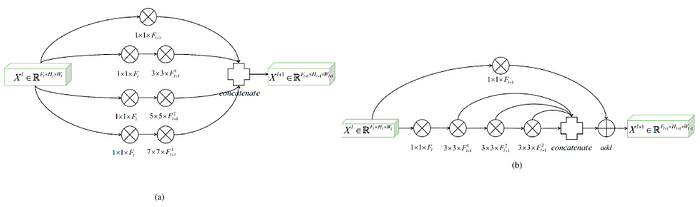

1.1. Redesigned Inception (RI) Block

- In the original Inception block, independent convolutional paths are used to extract features with different receptive fields.

- The proposed RI block instead adopts the dense connectivity of DenseNet to extract multiscale details, and it replaces the conventional pair of 3×3 convolutional layers in U-Net.
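The review does not spell out the RI block's exact layout, so the following is only a bookkeeping sketch of what "dense connectivity plus multiscale fusion" could look like, under these assumptions: the block stacks a few 3×3 convolutions, each layer sees the concatenation of the block input and all earlier layer outputs (DenseNet style), and the block output concatenates every layer's output, so it mixes 3×3, 5×5, and 7×7 receptive fields. The growth rate, layer count, and names (`LayerInfo`, `dense_multiscale_block`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LayerInfo:
    in_channels: int      # channels seen by this 3x3 conv (dense concatenation)
    out_channels: int     # new channels produced (the growth rate)
    receptive_field: int  # largest receptive field relative to the block input


def dense_multiscale_block(c_in: int, growth: int,
                           n_layers: int = 3) -> tuple[list[LayerInfo], int]:
    """Channel and receptive-field bookkeeping for an assumed dense multiscale block."""
    layers = []
    available = c_in  # channels in the running concatenation
    rf = 1
    for _ in range(n_layers):
        rf += 2  # the longest path gains one more 3x3 conv
        layers.append(LayerInfo(available, growth, rf))
        available += growth  # DenseNet-style: append this output for later layers
    # Assumed multiscale fusion: concatenate all layer outputs (3x3, 5x5, 7x7 scales).
    fused_channels = n_layers * growth
    return layers, fused_channels


layers, fused = dense_multiscale_block(c_in=64, growth=32)
for layer in layers:
    print(layer)
print("fused output channels:", fused)
```

The contrast with a classic Inception block is that later layers here reuse earlier outputs instead of running fully independent branches, which is how a larger receptive field can be reached with only 3×3 kernels.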

1.2. Attentive BConvLSTM Block

- Instead of the plain skip connection in U-Net, the proposed Attentive BConvLSTM (BA) block, which integrates the BConvLSTM block and the Attention block, is used to reduce the semantic gap between the encoder and decoder feature maps.

- First, the BConvLSTM block provides hidden state tensors for the Attention block.

- Then, the Attention block assigns different weights to the hidden state tensors output by BConvLSTM to highlight the salient features in the skip connections.
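Since the block equations are not given here, the following is only a toy sketch of the two-step flow under several assumptions: feature maps are single-channel and flattened to lists; kernels are 1×1, so the ConvLSTM reduces to a standard LSTM applied independently at each pixel; the encoder and decoder features at a pixel form a length-2 sequence read in both directions; and a hypothetical softmax attention weights the forward and backward hidden states. All names and weights are made up for illustration.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x: float, h: float, c: float, w: dict[str, float]) -> tuple[float, float]:
    """One LSTM step for one channel (a 1x1-kernel ConvLSTM reduces to this per pixel)."""
    i = sigmoid(w["xi"] * x + w["hi"] * h + w["bi"])    # input gate
    f = sigmoid(w["xf"] * x + w["hf"] * h + w["bf"])    # forget gate
    o = sigmoid(w["xo"] * x + w["ho"] * h + w["bo"])    # output gate
    g = math.tanh(w["xg"] * x + w["hg"] * h + w["bg"])  # candidate cell state
    c = f * c + i * g
    return o * math.tanh(c), c


def run(seq: list[float], w: dict[str, float]) -> float:
    """Run the LSTM over a sequence and return the final hidden state."""
    h, c = 0.0, 0.0
    for x in seq:
        h, c = lstm_step(x, h, c, w)
    return h


def make_weights(value: float) -> dict[str, float]:
    """Hypothetical helper: every weight and bias set to the same constant."""
    keys = ["xi", "hi", "bi", "xf", "hf", "bf", "xo", "ho", "bo", "xg", "hg", "bg"]
    return {k: value for k in keys}


def attentive_bconvlstm(enc, dec, w_fwd, w_bwd, w_att: float) -> list[float]:
    out = []
    for e, d in zip(enc, dec):      # 1x1 kernels: pixels are independent
        h_f = run([e, d], w_fwd)    # forward direction: encoder, then decoder
        h_b = run([d, e], w_bwd)    # backward direction: decoder, then encoder
        # Hypothetical attention: score each hidden state, softmax over the two.
        s_f, s_b = w_att * h_f, w_att * h_b
        m = max(s_f, s_b)           # subtract the max for numerical stability
        a_f, a_b = math.exp(s_f - m), math.exp(s_b - m)
        out.append((a_f * h_f + a_b * h_b) / (a_f + a_b))
    return out


enc = [0.2, -1.0, 0.5]
dec = [0.7, 0.3, -0.4]
print(attentive_bconvlstm(enc, dec, make_weights(0.5), make_weights(-0.3), w_att=2.0))
```

A real implementation would use learned multi-channel 3×3 kernels and a learned attention module; the toy only shows the order of operations: bidirectional recurrence over the encoder/decoder pair first, then attention over the resulting hidden states.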

2. Results

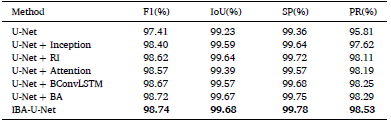

2.1. Lung Segmentation

IBA-U-Net achieves 99.55% sensitivity (Sen), 99.73% accuracy (Acc), and 99.45% AUC, outperforming the state-of-the-art methods on the lung segmentation dataset across these metrics.
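For reference, the thresholded pixel-wise metrics mentioned in this review (Sen, Acc, precision, F1-score, IoU), plus specificity for completeness, are conventionally computed from confusion-matrix counts as below. These are the standard definitions; the paper's exact averaging (per image or over all pixels) is not stated here, and the two binary masks are made-up toy data.

```python
def confusion_counts(pred: list[int], truth: list[int]) -> tuple[int, int, int, int]:
    """Count TP, TN, FP, FN over flattened binary masks (1 = foreground)."""
    tp = sum(1 for p, t in zip(pred, truth) if p and t)
    tn = sum(1 for p, t in zip(pred, truth) if not p and not t)
    fp = sum(1 for p, t in zip(pred, truth) if p and not t)
    fn = sum(1 for p, t in zip(pred, truth) if not p and t)
    return tp, tn, fp, fn


def segmentation_metrics(pred: list[int], truth: list[int]) -> dict[str, float]:
    # Note: these divisions fail if a mask has no positives or no negatives.
    tp, tn, fp, fn = confusion_counts(pred, truth)
    return {
        "Sen": tp / (tp + fn),                    # sensitivity / recall
        "Spe": tn / (tn + fp),                    # specificity
        "Acc": (tp + tn) / (tp + tn + fp + fn),   # accuracy
        "Precision": tp / (tp + fp),
        "F1": 2 * tp / (2 * tp + fp + fn),        # equals the Dice coefficient
        "IoU": tp / (tp + fp + fn),               # Jaccard index
    }


pred = [1, 1, 0, 0, 1, 0, 1, 1]
truth = [1, 0, 0, 0, 1, 1, 1, 0]
for name, value in segmentation_metrics(pred, truth).items():
    print(f"{name}: {value:.3f}")
```

With TP = 3, TN = 2, FP = 2, FN = 1, the toy masks give Sen = 0.75, Acc = 0.625, precision = 0.6, F1 ≈ 0.667, and IoU = 0.5. Because background pixels usually dominate medical images, accuracy tends to be high even for mediocre masks, which is one reason F1 and IoU are reported alongside it.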

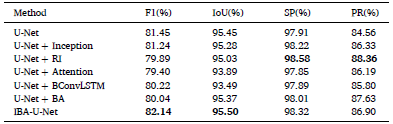

- Adding the RI block and the BA block to the original U-Net substantially increases segmentation accuracy.

- U-Net + RI outperforms U-Net + Inception on all four metrics, which indicates that the proposed RI block is better suited to medical image segmentation.

- U-Net + BA also outperforms both U-Net + Attention and U-Net + BConvLSTM used alone.

The full IBA-U-Net shows the best overall performance, with significant gains in F1-score and precision in particular.

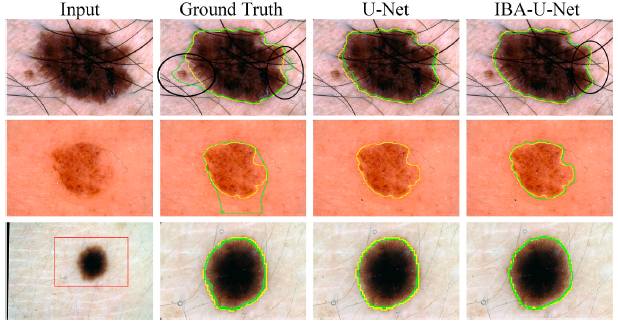

U-Net produces many incorrect segmentations (areas marked by red ovals), whereas IBA-U-Net segments the images reliably without such false predictions.

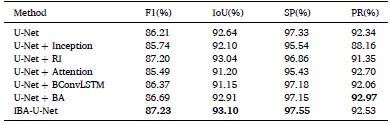

2.4. Skin Disease Segmentation

IBA-U-Net achieves 82.91% Sen, 94.40% Acc, and 90.13% AUC, outperforming the other methods on the skin disease dataset.

The proposed network surpasses U-Net on all four metrics, showing that it performs well on this challenging dataset.

- When the background and the lesion are similar in color, U-Net makes some incorrect predictions, and the rougher the background, the more incorrect predictions it makes.

In contrast, U-Net + BA and IBA-U-Net produce finer segmentations.

2.5. Retinal Vessel Segmentation

IBA-U-Net achieves 78.58% Sen, 95.50% Acc, and 97.78% AUC, outperforming the other methods.
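Unlike the thresholded metrics, AUC is computed from the network's soft probability map. One standard formulation (the Mann-Whitney statistic) is the probability that a randomly chosen foreground pixel receives a higher score than a randomly chosen background pixel, with ties counted as one half. The O(P·N) sketch below is only meant for small toy inputs; real evaluations would typically call a library routine such as scikit-learn's `roc_auc_score`. The scores and labels are made up.

```python
def auc_rank(scores: list[float], labels: list[int]) -> float:
    """AUC as P(score of a positive pixel > score of a negative pixel); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative pixel")
    wins = 0.0
    for p in pos:          # compare every foreground score ...
        for n in neg:      # ... with every background score
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


scores = [0.9, 0.8, 0.35, 0.6, 0.1, 0.4]
labels = [1, 1, 1, 0, 0, 0]
print(auc_rank(scores, labels))
```

Here 7 of the 9 foreground/background pairs are ranked correctly, so the AUC is 7/9 ≈ 0.778. Because AUC is threshold-free, it can stay high even when the 0.5-thresholded sensitivity is modest, as with the 78.58% Sen and 97.78% AUC above.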

On this dataset, the segmentation metrics of IBA-U-Net and U-Net differ only slightly.

- The ground truth contains some small background pixels. U-Net + BA and IBA-U-Net successfully segment part of these pixels, whereas U-Net often misses them.

In addition, the IBA-U-Net segmentation results suggest that the network is more robust to image noise.

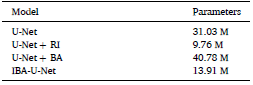

Adding the RI block greatly reduces the number of network parameters while increasing the F1-score and intersection over union (IoU).
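F1-score and IoU tend to rise together in such ablations. For a single binary mask this follows from a standard identity (not a result of the paper): starting from the confusion-matrix definitions below, F1 is a fixed increasing function of IoU. Dataset-level averages of the two need not satisfy the identity exactly, since averaging does not commute with the nonlinear map.

$$
F_1 = \frac{2\,TP}{2\,TP + FP + FN}, \qquad \mathrm{IoU} = \frac{TP}{TP + FP + FN}
$$

$$
\frac{2\,\mathrm{IoU}}{1 + \mathrm{IoU}}
= \frac{2\,TP / (TP + FP + FN)}{(2\,TP + FP + FN) / (TP + FP + FN)}
= \frac{2\,TP}{2\,TP + FP + FN}
= F_1
$$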