Brief Review — SNGAN: Spectral Normalization for Generative Adversarial Networks

SNGAN, Spectral Normalization for Weight Matrices, Outperforms WGAN-GP

Spectral Normalization for Generative Adversarial Networks

SNGAN, by Preferred Networks, Inc., Ritsumeikan University, and National Institute of Informatics

2018 ICLR, Over 4200 Citations (Sik-Ho Tsang @ Medium)

Generative Adversarial Network (GAN)

Image Synthesis: 2014 … 2019 [SAGAN]


- A novel weight normalization technique, spectral normalization, is proposed to stabilize the training of the discriminator. It is computationally light and easy to incorporate into existing implementations.

Outline

- SNGAN

- Results

1. SNGAN

Spectral normalization controls the Lipschitz constant of the discriminator function f by directly constraining the spectral norm of each layer g: h_in → h_out.
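Why a per-layer constraint is enough: the Lipschitz norm of a composition is bounded by the product of the Lipschitz norms of its parts. A linear layer g(h) = Wh has Lipschitz norm σ(W), and activations such as ReLU and leaky ReLU have Lipschitz norm 1, so for a discriminator with weight matrices W^1, …, W^{L+1} (bias terms omitted, as in the paper's derivation) the bound is roughly:

$$\lVert g_1 \circ g_2 \rVert_{\mathrm{Lip}} \le \lVert g_1 \rVert_{\mathrm{Lip}} \cdot \lVert g_2 \rVert_{\mathrm{Lip}}, \qquad \lVert f \rVert_{\mathrm{Lip}} \le \prod_{l=1}^{L+1} \sigma(W^l)$$

If every σ(W^l) is brought to 1, the whole discriminator is at most 1-Lipschitz.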

- σ(A) is the spectral norm of a matrix A (its L2 operator norm), which equals the largest singular value of A.

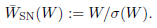

- Then each weight matrix W is normalized by its spectral norm:

$$\bar{W}_{\mathrm{SN}}(W) := \frac{W}{\sigma(W)}$$

- After normalization, the spectral norm of the normalized weight matrix equals 1:

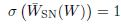
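To make σ(A) and the normalization step concrete, here is a minimal pure-Python sketch for a 2×2 matrix. The toy matrix and the helper names `spectral_norm_2x2`, `gain` and `spectrally_normalize` are hypothetical illustrations, not the paper's code; real layers would use a library SVD or the power iteration described next. The sketch computes σ(W) in closed form, checks that it is the largest stretch factor ‖Wh‖₂/‖h‖₂ over all directions, and confirms that W / σ(W) has spectral norm 1.

```python
import math


def spectral_norm_2x2(a):
    """sigma(A): largest singular value of a 2x2 matrix [[a11, a12], [a21, a22]].

    Closed form: square root of the largest eigenvalue of the symmetric matrix A^T A.
    """
    (a11, a12), (a21, a22) = a
    p = a11 * a11 + a21 * a21  # (A^T A)[0][0]
    q = a11 * a12 + a21 * a22  # (A^T A)[0][1] == (A^T A)[1][0]
    r = a12 * a12 + a22 * a22  # (A^T A)[1][1]
    lam_max = (p + r) / 2 + math.sqrt(((p - r) / 2) ** 2 + q * q)
    return math.sqrt(lam_max)


def gain(a, h):
    """Stretch factor ||A h||_2 / ||h||_2 for a nonzero 2-vector h."""
    ah = (a[0][0] * h[0] + a[0][1] * h[1], a[1][0] * h[0] + a[1][1] * h[1])
    return math.hypot(*ah) / math.hypot(*h)


def spectrally_normalize(w):
    """Return W / sigma(W)."""
    s = spectral_norm_2x2(w)
    return [[x / s for x in row] for row in w]


W = [[3.0, 1.0], [0.0, 2.0]]
sigma = spectral_norm_2x2(W)

# sigma(W) is the largest stretch over all directions: scan unit vectors (cos t, sin t).
angles = [k * math.pi / 1800 for k in range(1800)]
best_gain = max(gain(W, (math.cos(t), math.sin(t))) for t in angles)

W_sn = spectrally_normalize(W)
print(sigma, best_gain)         # nearly equal; best_gain does not exceed sigma (up to rounding)
print(spectral_norm_2x2(W_sn))  # 1 up to floating-point rounding
```

Because σ(cW) = |c|σ(W), multiplying W by any nonzero constant leaves the normalized matrix unchanged; only the direction of W matters after normalization.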

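- For fast computation, the power iteration method is used to estimate σ(W) for spectral normalization instead of computing a full singular value decomposition. Starting from a random vector ũ, each round updates the approximate right and left singular vectors:

$$\tilde{v} \leftarrow \frac{W^\top \tilde{u}}{\lVert W^\top \tilde{u} \rVert_2}, \qquad \tilde{u} \leftarrow \frac{W \tilde{v}}{\lVert W \tilde{v} \rVert_2}$$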

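- Since the change in W at each update is small, so is the change in its largest singular value. The approximate left singular vector ũ from the previous update is therefore reused as the starting point, and one round of power iteration per update was sufficient in the paper's experiments. The spectral norm of W is approximated with the resulting pair of approximate singular vectors:

$$\sigma(W) \approx \tilde{u}^\top W \tilde{v}$$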
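A minimal pure-Python sketch of this power-iteration estimate, with ũ kept between updates so that each update runs only one round. The class `SpectralNormEstimator`, the helper functions, the toy 2×3 weight and the fake "gradient update" are hypothetical illustrations under these assumptions, not the paper's implementation. An exact closed-form value for a 2-row matrix is included only as a reference to compare against.

```python
import math
import random


def matvec(w, x):
    """Compute W x, with W stored as a list of rows."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def matvec_t(w, y):
    """Compute W^T y without building the transpose explicitly."""
    return [sum(w[i][j] * y[i] for i in range(len(w))) for j in range(len(w[0]))]


def l2_normalize(x, eps=1e-12):
    """Scale x to unit L2 norm (eps guards against division by zero)."""
    norm = math.sqrt(sum(v * v for v in x))
    return [v / (norm + eps) for v in x]


class SpectralNormEstimator:
    """Hypothetical helper: keeps u~ between calls, one power-iteration round per call."""

    def __init__(self, n_rows, seed=0):
        rng = random.Random(seed)
        # Random initial left singular vector u~ (length = number of output units)
        self.u = l2_normalize([rng.gauss(0.0, 1.0) for _ in range(n_rows)])

    def step(self, w):
        """Update v~ and u~ once for the current W; return (sigma estimate, W / sigma)."""
        v = l2_normalize(matvec_t(w, self.u))  # v~ <- W^T u~ / ||W^T u~||
        wv = matvec(w, v)
        self.u = l2_normalize(wv)              # u~ <- W v~ / ||W v~||
        sigma = sum(ui * x for ui, x in zip(self.u, wv))  # sigma(W) ~= u~^T W v~
        w_sn = [[x / sigma for x in row] for row in w]
        return sigma, w_sn


def exact_sigma_two_rows(w):
    """Reference only: exact largest singular value of a 2-row W via the 2x2 matrix W W^T."""
    p = sum(x * x for x in w[0])
    q = sum(x * y for x, y in zip(w[0], w[1]))
    r = sum(y * y for y in w[1])
    return math.sqrt((p + r) / 2 + math.sqrt(((p - r) / 2) ** 2 + q * q))


# Toy 2x3 weight (2 outputs, 3 inputs) that drifts slightly at every "training step".
W = [[3.0, 1.0, 0.5], [0.0, 2.0, 1.0]]
estimator = SpectralNormEstimator(n_rows=2)
for _ in range(50):
    # Stand-in for a small gradient update, not a real optimizer step.
    W = [[x + 0.001 * ((i + j) % 3 - 1) for j, x in enumerate(row)] for i, row in enumerate(W)]
    sigma_est, W_sn = estimator.step(W)

print(sigma_est, exact_sigma_two_rows(W))  # the two values should agree closely
```

Each call costs only two matrix-vector products, which is why recycling ũ is much cheaper than recomputing a full SVD at every update.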

2. Results

2.1. Inception Score and FID

Under their best hyperparameter settings, SNGANs achieved better Inception Scores and FIDs than almost all contemporary methods, and performed even better when trained with the hinge loss.
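For reference, the hinge version of the adversarial loss has the discriminator minimize E_x[max(0, 1 − D(x))] + E_z[max(0, 1 + D(G(z)))] and the generator minimize −E_z[D(G(z))]. The sketch below evaluates these two losses on plain lists of discriminator outputs; the function names and the toy scores are hypothetical, and a real training loop would compute them on tensors with automatic differentiation.

```python
def d_hinge_loss(real_scores, fake_scores):
    """Discriminator hinge loss: mean(max(0, 1 - D(x))) + mean(max(0, 1 + D(G(z))))."""
    real_term = sum(max(0.0, 1.0 - s) for s in real_scores) / len(real_scores)
    fake_term = sum(max(0.0, 1.0 + s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term


def g_hinge_loss(fake_scores):
    """Generator loss: -mean(D(G(z)))."""
    return -sum(fake_scores) / len(fake_scores)


real = [1.5, 0.2, -0.3]  # toy D(x) values for real images
fake = [-2.0, 0.5]       # toy D(G(z)) values for generated images
print(d_hinge_loss(real, fake), g_hinge_loss(fake))
```

The hinge terms stop penalizing the discriminator once a real score exceeds 1 or a fake score falls below −1, so in the toy values above the first real sample and the first fake sample contribute nothing to the discriminator loss.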

2.2. Visual Quality

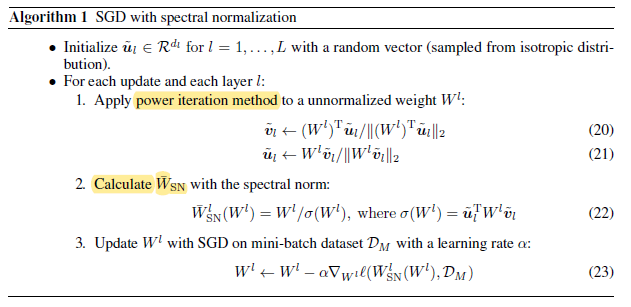

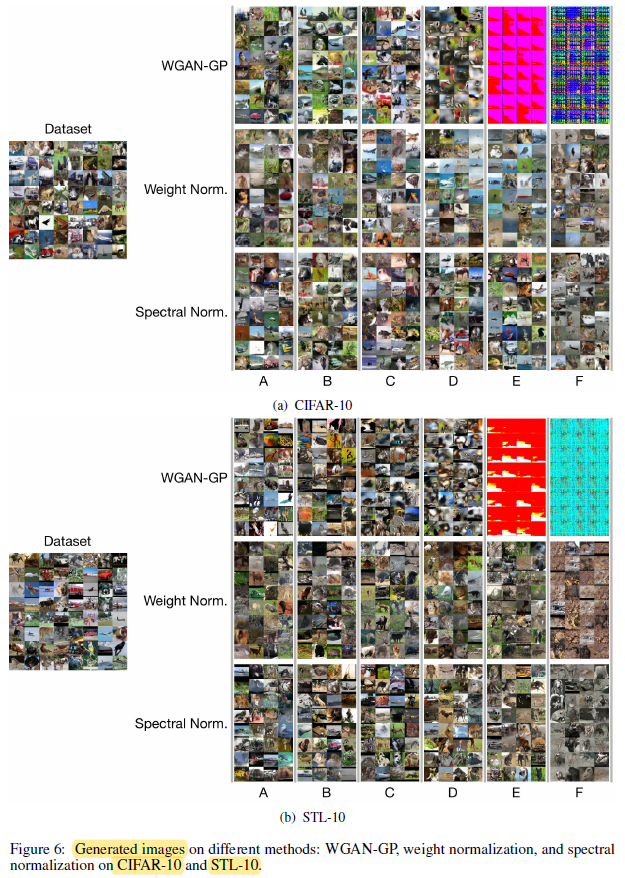

- A-F are different hyperparameter settings (learning rate, Adam's β1 and β2, and the number of discriminator updates per generator update) for GAN training.

- WGAN-GP failed to train good GANs with high learning rates and high momentum (settings D, E and F).

SN-GANs were consistently better than GANs with weight normalization in terms of the quality of generated images.