Review — DSOD: Learning Deeply Supervised Object Detectors from Scratch (Object Detection)

1st Detection Network Trained From Scratch, Modified From SSD, Using Dense Blocks From DenseNet, Outperforms SSD, YOLOv2, Faster R-CNN, R-FCN, ION

In this story, DSOD: Learning Deeply Supervised Object Detectors from Scratch, (DSOD), by Fudan University, Tsinghua University, and Intel Labs China, is reviewed. In this paper:

- Deeply Supervised Object Detector (DSOD) is designed, together with a set of design principles that help to train detectors from scratch.

- One of the key findings is the use of deep supervision and dense layer-wise connections.

This is a 2017 ICCV paper with over 300 citations. (Sik-Ho Tsang @ Medium)

Outline

- DSOD: Network Architecture

- A Set of Principles to Train from Scratch

- Ablation Study

- Experimental Results

- Discussions

1. DSOD: Network Architecture

- The proposed DSOD method is a multi-scale proposal-free detection framework similar to SSD. (Please feel free to visit SSD if interested.)

- Proposal-free means there is no region proposal network (RPN) such as the one in Faster R-CNN or R-FCN.

- The network structure of DSOD can be divided into two parts: the backbone sub-network for feature extraction and the front-end sub-network for prediction over multi-scale response maps.

1.1. Backbone Subnetwork

- The backbone sub-network is a variant of the deeply supervised DenseNets structure, which is composed of a stem block, four dense blocks, two transition layers and two transition w/o pooling layers.

1.2. Front-End Sub-Network

- The front-end sub-network (also called the DSOD prediction layers) fuses multi-scale prediction responses with an elaborate dense structure.

- The above table shows the details of the DSOD network architecture.

- Same as SSD, smooth L1 loss is used for localization purpose and softmax loss is used for classification purpose.
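
The two loss terms named above can be sketched for a single matched default box as follows. This is a minimal illustration in plain Python, not the authors' implementation: the function names, the `beta` and `alpha` parameters, and the per-box formulation are assumptions, and the matching, hard negative mining and normalization used by SSD are left out.

```python
import math

def smooth_l1(pred, target, beta=1.0):
    """Smooth L1 loss summed over the coordinates of one box."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        # Quadratic near zero, linear for large errors.
        total += 0.5 * d * d / beta if d < beta else d - 0.5 * beta
    return total

def softmax_cross_entropy(logits, label):
    """Softmax loss for one box: -log(softmax(logits)[label])."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[label]

def detection_loss(loc_pred, loc_target, cls_logits, cls_label, alpha=1.0):
    """Classification term plus alpha times the localization term (assumed weighting)."""
    cls = softmax_cross_entropy(cls_logits, cls_label)
    loc = smooth_l1(loc_pred, loc_target)
    return cls + alpha * loc

# Hypothetical box offsets and class scores, for illustration only.
example = detection_loss([0.5, 2.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0], [2.0, 0.5, 0.1], 0)
print(round(example, 4))
```

The smooth L1 term is less sensitive to outlier boxes than a squared error, because large errors grow only linearly.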

2. A Set of Principles to Train from Scratch

2.1. Principle 1: Proposal-Free

- It is observed that only the proposal-free method can converge successfully without the pre-trained models (while those networks with RPN cannot).

- The proposal-based methods work well with pre-trained network models because the parameter initialization is good for those layers before RoI pooling, while this is not true for training from scratch.

2.2. Principle 2: Deep Supervision

- Deep supervision is achieved with an elegant and implicit solution, the dense layer-wise connection introduced in DenseNet.

- Earlier layers in DenseNet can receive additional supervision from the objective function through the skip connections.

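- Moreover, a transition w/o pooling layer is used, i.e. a transition layer that does not reduce the feature map resolution.

- In the original DenseNet, every transition layer contains a pooling operation, so the number of dense blocks is fixed if the output resolution is to be kept. The transition w/o pooling layer removes this restriction on the number of dense blocks in DSOD.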
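
The bookkeeping behind dense connections and the two kinds of transition layer can be sketched as below. It only tracks channel counts and resolution; the helper names, the starting shape, the number of layers per block and the ceil-mode pooling are assumptions made for illustration, not values taken from the paper.

```python
import math

def dense_block(channels, num_layers, growth_rate):
    """Each layer sees all earlier outputs and adds `growth_rate` new maps."""
    inputs_seen = []
    for _ in range(num_layers):
        inputs_seen.append(channels)   # width of the concatenated input
        channels += growth_rate        # concatenate the k new feature maps
    return channels, inputs_seen

def transition(channels, size, theta=1.0, pooling=True):
    """1x1 conv with compression theta, optionally followed by 2x2 pooling."""
    channels = int(channels * theta)
    if pooling:
        size = math.ceil(size / 2)     # assumed ceil-mode 2x2 pooling, stride 2
    return channels, size

# Hypothetical walk through four dense blocks: two transitions with pooling,
# then two transitions w/o pooling that keep the resolution unchanged.
channels, size = 128, 75
for pooling in (True, True, False, False):
    channels, _ = dense_block(channels, num_layers=8, growth_rate=48)
    channels, size = transition(channels, size, pooling=pooling)
    print(channels, size)
```

Because the last two transitions skip pooling, extra dense blocks can be stacked without shrinking the final feature map any further.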

2.3. Principle 3: Stem Block

- The stem block is a stack of three 3×3 convolution layers followed by a 2×2 max pooling layer, which improves the detection performance.

- Compared with the original design in DenseNet (7×7 conv-layer, stride = 2 followed by a 3×3 max pooling, stride = 2), the stem block can reduce the information loss from raw input images.
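
To compare the two stems, the sketch below traces the spatial size of a 300×300 input through each of them. The DenseNet kernel sizes and strides come from the text above; the paddings, and the strides of the three 3×3 convolutions in the DSOD stem (first one stride 2, the others stride 1), are assumptions.

```python
def conv_out(size, kernel, stride, padding):
    """Output side length of a conv or pooling layer (floor convention)."""
    return (size + 2 * padding - kernel) // stride + 1

def trace(size, layers):
    """Side length after each (kernel, stride, padding) layer, input included."""
    sizes = [size]
    for kernel, stride, padding in layers:
        size = conv_out(size, kernel, stride, padding)
        sizes.append(size)
    return sizes

# (kernel, stride, padding); paddings and DSOD strides are assumed values.
DENSENET_STEM = [(7, 2, 3), (3, 2, 1)]                     # 7x7 conv, 3x3 max pool
DSOD_STEM = [(3, 2, 1), (3, 1, 1), (3, 1, 1), (2, 2, 0)]   # three 3x3 convs, 2x2 max pool

print("DenseNet stem:", trace(300, DENSENET_STEM))
print("DSOD stem:    ", trace(300, DSOD_STEM))
```

Under these assumptions both stems end at the same resolution; the stem block simply gets there with several small kernels instead of one large strided 7×7 convolution.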

2.4. Principle 4: Dense Prediction Structure

- As shown in the figure above, for 300×300 input images, six scales of feature maps are generated.

- The Scale-1 feature maps have the largest resolution (38×38) in order to handle the small objects in an image.

- Then, a plain transition layer with the bottleneck structure (a 1×1 conv-layer for reducing the number of feature maps plus a 3×3 conv-layer) is adopted between two contiguous scales of feature maps.

- In the plain structure, as in SSD, each later scale is directly transited from the adjacent previous scale. In contrast, the dense structure for prediction fuses multi-scale information for each scale.

- In DSOD, in each scale (except scale-1), half of the feature maps are learned from the previous scale with a series of conv-layers, while the remaining half feature maps are directly down-sampled from the contiguous high-resolution feature maps.

- i.e. each scale only learns half of its feature maps and reuses the previous ones for the remaining half. This dense prediction structure can yield more accurate results with fewer parameters than the plain structure.
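
The half-learned, half-reused rule can be sketched at the level of tensor shapes. The code below is only shape bookkeeping with `(channels, height, width)` tuples: the function names, the channel counts and the ceil-style stride-2 down-sampling are assumptions, so the resolutions it prints after the 38×38 scale are illustrative rather than the paper's values.

```python
import math

def next_scale_dense(prev_shape, out_channels):
    """Shapes of the two branches and their fusion for one dense prediction scale."""
    _, h, w = prev_shape
    new_h, new_w = math.ceil(h / 2), math.ceil(w / 2)   # assumed stride-2 down-sampling
    half = out_channels // 2
    learned = (half, new_h, new_w)                 # 1x1 conv + 3x3 conv branch
    reused = (out_channels - half, new_h, new_w)   # down-sampled previous scale
    fused = (learned[0] + reused[0], new_h, new_w) # channel-wise concatenation
    return learned, reused, fused

def build_scales(first_shape, out_channels, num_scales=6):
    """Chain the rule above to obtain `num_scales` prediction scales."""
    scales = [first_shape]
    for _ in range(num_scales - 1):
        _, _, fused = next_scale_dense(scales[-1], out_channels)
        scales.append(fused)
    return scales

# Hypothetical channel counts; only the 38x38 starting resolution is from the text.
for shape in build_scales((256, 38, 38), out_channels=256):
    print(shape)
```

Since only half of the channels at each scale come from new convolutions, the learned branch needs fewer filters than a plain transition producing all channels.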

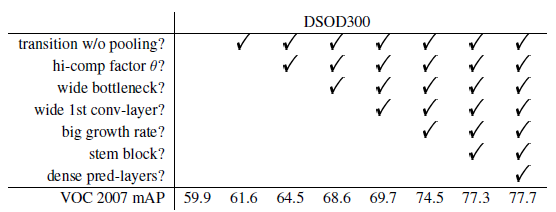

3. Ablation Study

- DSOD300 (with 300×300 inputs) is used.

- The models are trained with the combined training set from VOC 2007 trainval and 2012 trainval (“07+12”), and tested on the VOC 2007 test set.

- DS/A-B-k-θ describes the backbone network structure.

- A denotes the number of channels in the 1st conv-layer.

- B denotes the number of channels in each bottleneck layer (1×1 convolution).

- k is the growth rate in dense blocks.

- θ denotes the compression factor in transition layers.
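
The DS/A-B-k-θ naming can be read mechanically, as in the small parser below. The `BackboneConfig` class and `parse_backbone` function are hypothetical helpers written for this review; they accept both a plain hyphen and the en dash used in the model names here.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class BackboneConfig:
    first_conv_channels: int   # A
    bottleneck_channels: int   # B
    growth_rate: int           # k
    compression: float         # theta

def parse_backbone(name):
    """Parse a 'DS/A-B-k-theta' string into its four fields."""
    prefix, _, spec = name.partition("/")
    if prefix != "DS":
        raise ValueError(f"unexpected prefix in {name!r}")
    parts = re.split(r"[-\u2013]", spec)   # hyphen or en dash
    if len(parts) != 4:
        raise ValueError(f"expected four fields in {name!r}")
    a, b, k, theta = parts
    return BackboneConfig(int(a), int(b), int(k), float(theta))

# The two lite backbones mentioned later in this review.
print(parse_backbone("DS/64–12–16–1"))
print(parse_backbone("DS/64–64–16–1"))
```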

3.1. Configurations in Dense Blocks

- Compression Factor in Transition Layers: A compression factor θ = 1 means that there is no feature map reduction in the transition layer, while θ = 0.5 means that the number of feature maps is halved. Results show that θ = 1 yields 2.9% higher mAP than θ = 0.5.

- # Channels in bottleneck layers: Wider bottleneck layers (with more channels of response maps) improve the performance greatly (4.1% mAP).

- # Channels in the 1st conv-layer: A large number of channels in the first conv-layer is beneficial, which brings 1.1% mAP improvement.

- Growth rate: A large growth rate k is found to be much better. A 4.8% mAP improvement is observed when k is increased from 16 to 48 with 4k bottleneck channels.

3.2. Effectiveness of Design Principles

- Proposal-free Framework: For the 2-stage Faster R-CNN and R-FCN, the training process failed to converge for all the network structures that are attempted (VGGNet, ResNet, DenseNet).

- Using the proposal-free SSD, the training converges successfully but gives much worse results (69.6% for VGGNet) than fine-tuning from a pre-trained model (75.8%).

- (The results are shown in the next table at the next section.)

- Deep Supervision: DSOD300 achieves 77.7% mAP, which is much better than SSD300 trained from scratch without deep supervision (69.6%). It is also much better than the fine-tuned result of SSD300 (75.8%).

- (The results are shown in the next table at the next section.)

- Transition w/o Pooling Layer: The network structure with the Transition w/o pooling layer brings 1.7% performance gain.

- Stem Block: The stem block improves the performance from 74.5% to 77.3%.

- Dense Prediction Structure: DSOD with the dense front-end structure runs slightly slower than with the plain structure (17.4 fps vs. 20.6 fps) on a Titan X GPU. However, the dense structure improves mAP from 77.3% to 77.7%, while reducing the parameters from 18.2M to 14.8M.

- What if pre-training on ImageNet?: One lite backbone network, DS/64–12–16–1, is pre-trained on ImageNet and obtains 66.8% top-1 accuracy and 87.8% top-5 accuracy on the validation set. After fine-tuning, 70.3% mAP is obtained on the VOC 2007 test set.

- The corresponding training-from-scratch solution achieves 70.7% mAP, which is even slightly better.

3.3. Runtime Analysis

- With 300×300 input, full DSOD can process an image in 48.6ms (20.6 fps) on a single Titan X GPU with the plain prediction structure, and 57.5ms (17.4 fps) with the dense prediction structure.

- As a comparison, R-FCN runs at 90ms (11 fps) for ResNet-50 and 110ms (9 fps) for ResNet-101.

- The SSD300* runs at 82.6ms (12.1 fps) for ResNet-101 and 21.7ms (46 fps) for VGGNet.

- In addition, DSOD uses only about 1/2 of the parameters of SSD300 with VGGNet, 1/4 of SSD300 with ResNet-101, 1/4 of R-FCN with ResNet-101 and 1/10 of Faster R-CNN with VGGNet.

- A lite version of DSOD (10.4M parameters, w/o any speed optimization) can run at 25.8 fps with only a 1% mAP drop.

- (The results are shown in the next table at the next section.)
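
The per-image latencies and frame rates quoted above are two views of the same measurement, related by fps = 1000 / latency in ms. The short check below uses only the latencies listed in this section; the function name and dictionary keys are made up for illustration.

```python
def fps_from_latency(ms_per_image):
    """Frames per second for a given per-image latency in milliseconds."""
    return 1000.0 / ms_per_image

# Latencies in ms as quoted in this section.
latencies_ms = {
    "DSOD300 (plain prediction)": 48.6,
    "DSOD300 (dense prediction)": 57.5,
    "R-FCN (ResNet-50)": 90.0,
    "R-FCN (ResNet-101)": 110.0,
    "SSD300* (ResNet-101)": 82.6,
    "SSD300* (VGGNet)": 21.7,
}

for name, ms in latencies_ms.items():
    print(f"{name}: {fps_from_latency(ms):.1f} fps")
```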

4. Experimental Results

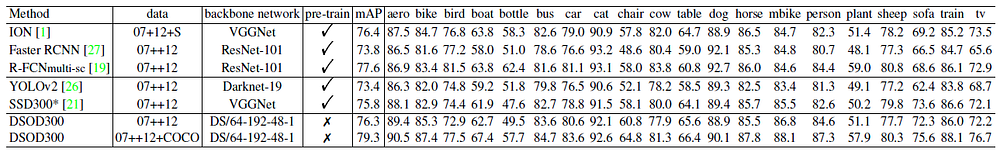

4.1. PASCAL VOC2007

- Models are trained based on the union of VOC 2007 trainval and VOC 2012 trainval (“07+12”).

- A batch size of 128 is used, by accumulating gradients over two training iterations. Otherwise, memory is not enough.

- DSOD300 with plain connection achieves 77.3%, which is slightly better than SSD300* (77.2%) and outperforms YOLOv2.

- DSOD300 with dense prediction structure improves the result to 77.7%.

- After adding COCO as training data, the performance is further improved to 81.7%.
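
The gradient accumulation mentioned above can be illustrated on a toy problem: averaging the gradients of two half-batches gives the same update direction as one full batch. This is a plain-Python sketch on a one-parameter linear model; the model, the data and the equal split into two chunks of 64 are assumptions, not the training code of the paper.

```python
import math

def grad_mse(w, batch):
    """Gradient of the mean squared error of y = w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def accumulated_grad(w, batch, num_steps):
    """Split `batch` into `num_steps` equal chunks and average their gradients."""
    chunk = len(batch) // num_steps
    total = 0.0
    for i in range(num_steps):
        mini = batch[i * chunk:(i + 1) * chunk]
        total += grad_mse(w, mini) / num_steps   # scale so the sum is a mean
    return total

data = [(float(i), 3.0 * i + 1.0) for i in range(128)]   # toy data, 128 samples
full = grad_mse(0.5, data)
accumulated = accumulated_grad(0.5, data, num_steps=2)    # two chunks of 64
print(math.isclose(full, accumulated, rel_tol=1e-9))
```

Only one chunk has to fit in GPU memory at a time, which is why accumulation helps when the full batch does not fit.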

4.2. PASCAL VOC2012

- VOC 2012 trainval and VOC 2007 trainval + test are used for training, and then tested on VOC 2012 test set.

- DSOD300 achieves 76.3% mAP, which is consistently better than SSD300* (75.8%), YOLOv2 (73.4%), and Faster R-CNN (73.8%).

- With COCO used for training, DSOD300 (79.3%) outperforms ION (76.4%) and R-FCNmulti-sc (77.6%).

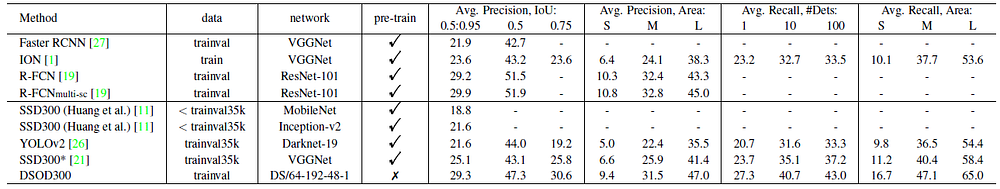

4.3. MS COCO

- The trainval set (train set + validation set) is used for training.

- DSOD300 achieves 29.3%/47.3% (overall mAP/mAP @ 0.5) on the test-dev set, which outperforms the baseline SSD300* by a large margin.

- The result is comparable to the single-scale R-FCN, and is close to the R-FCNmulti-sc which uses ResNet-101 as the pre-trained model.

- Interestingly, the DSOD result at 0.5 IoU is lower than that of R-FCN, but the DSOD [0.5:0.95] result is better or comparable.

- This indicates that the locations predicted by DSOD are more accurate than those of R-FCN under the larger overlap settings. It is reasonable that the small object detection precision is slightly lower than that of R-FCN, since the DSOD input image size (300×300) is much smaller than R-FCN’s (600×1000).
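
The difference between mAP @ 0.5 and the [0.5:0.95] metric comes from the IoU threshold at which a detection counts as correct. The sketch below computes IoU for axis-aligned boxes and lists which of the ten COCO-style thresholds a prediction passes; the box coordinates are made up, boxes are assumed to have positive area, and the full AP computation (ranking by score, precision-recall curves) is not shown.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    return inter / (area_a + area_b - inter)

# Thresholds 0.50, 0.55, ..., 0.95, built from integers to avoid float drift.
COCO_THRESHOLDS = [(50 + 5 * i) / 100 for i in range(10)]

def thresholds_passed(pred_box, gt_box):
    """IoU thresholds at which this prediction would count as a true positive."""
    overlap = iou(pred_box, gt_box)
    return [t for t in COCO_THRESHOLDS if overlap >= t]

gt = (0.0, 0.0, 10.0, 10.0)
tight = (0.0, 0.0, 10.0, 9.0)   # hypothetical well-localized prediction
loose = (0.0, 0.0, 10.0, 6.0)   # hypothetical loosely localized prediction
print(len(thresholds_passed(tight, gt)), len(thresholds_passed(loose, gt)))
```

Both predictions count at the 0.5 threshold, but only the tight one keeps counting at the stricter thresholds, which is how better localization raises the [0.5:0.95] score without changing mAP @ 0.5.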

5. Discussions

- Based on the above results, a few points are discussed.

5.1. Better Model Structure vs. More Training Data

- A better model structure might enable similar or better performance compared with complex models trained from large data.

Particularly, DSOD is only trained with 16,551 images on VOC 2007, but achieves competitive or even better performance than those models trained with 1.2 million + 16,551 images.

5.2. Why Training from Scratch?

First, there may be a big difference between the domain of the pre-trained model and the target domain.

Second, model fine-tuning restricts the structure design space for object detection networks.

5.3. Model Compactness vs. Performance

- Thanks to the parameter-efficient dense block, the model is much smaller than most competitive methods.

For instance, the smallest dense model (DS/64–64–16–1, with dense prediction layers) achieves 73.6% mAP with only 5.9M parameters, which shows great potential for applications on low-end devices.

Reference

[2017 ICCV] [DSOD]

DSOD: Learning Deeply Supervised Object Detectors from Scratch

Object Detection

2014: [OverFeat] [R-CNN]

2015: [Fast R-CNN] [Faster R-CNN] [MR-CNN & S-CNN] [DeepID-Net]

2016: [CRAFT] [R-FCN] [ION] [MultiPathNet] [Hikvision] [GBD-Net / GBD-v1 & GBD-v2] [SSD] [YOLOv1]

2017: [NoC] [G-RMI] [TDM] [DSSD] [YOLOv2 / YOLO9000] [FPN] [RetinaNet] [DCN / DCNv1] [Light-Head R-CNN] [DSOD]

2018: [YOLOv3] [Cascade R-CNN] [MegDet] [StairNet]

2019: [DCNv2] [Rethinking ImageNet Pre-training]