Review: Neural Machine Translation in Linear Time (ByteNet)

Character-Level Machine Translation Using CNN, Outperforms Character-Level GNMT, Slightly Worse Than Wordpiece-Level GNMT

In this story, Neural Machine Translation in Linear Time (ByteNet), by Google DeepMind, London, UK, is briefly reviewed. In this paper:

- ByteNet is introduced: a one-dimensional convolutional neural network (CNN) composed of two parts, one to encode the source sequence and the other to decode the target sequence.

- ByteNet is a character-level Neural Machine Translation (NMT) approach, which means that it performs translation character by character (not word by word).

This is a 2016 arXiv paper with over 400 citations. (Sik-Ho Tsang @ Medium)

Outline

- ByteNet Architecture

- Some Details of CNN

- Character-Level Machine Translation Results

1. ByteNet Architecture

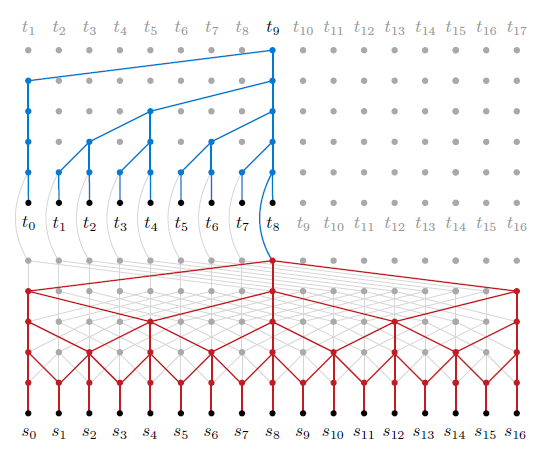

1.1. Encoder-Decoder Stacking

- The target decoder (blue) is stacked on top of the source encoder (red). The decoder generates the variable-length target sequence using dynamic unfolding.

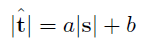

1.2. Dynamic Unfolding

- Given source and target sequences s and t with respective lengths |s| and |t|, one first chooses a sufficiently tight upper bound |t̂| on the target length |t| as a linear function of the source length |s|:

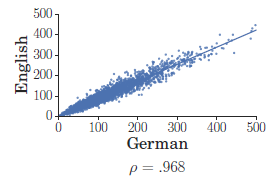

- The paper sets a=1.20 and b=0 when translating from English into German, as German sentences tend to be somewhat longer than their English counterparts.

- In this manner the representation produced by the encoder can be efficiently computed, while maintaining high bandwidth and being resolution-preserving.
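The length bound can be sketched in a few lines. This is a minimal illustration, assuming the linear form |t̂| = a|s| + b described above. The function name `target_length_bound` and the choice to round up to a whole number of steps are assumptions of this sketch, not details taken from the paper.

```python
import math

def target_length_bound(source_len: int, a: float = 1.20, b: float = 0.0) -> int:
    """Upper bound on the target length as a linear function of the source length.

    Defaults follow the English-to-German setting quoted above (a=1.20, b=0).
    Rounding up is an assumption of this sketch; the inner round() only guards
    against floating-point noise such as 110.00000000000001.
    """
    return math.ceil(round(a * source_len + b, 9))

# Bound for a few hypothetical source lengths (in characters).
for n in (10, 50, 100):
    print(n, target_length_bound(n))
```

With a=1.20, a 10-character source reserves 12 decoder steps, so the encoder representation is computed once at a fixed, known width.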

1.3. Input Embedding Tensor

- Given the target sequence t=t0, …, tn, the ByteNet decoder embeds each of the first n tokens t0, …, tn-1 via a look-up table (the n tokens t1, …, tn serve as targets for the predictions).

- The resulting embeddings are concatenated into a tensor of size n×2d where d is the number of inner channels in the network.
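The input/target split and the look-up can be illustrated as follows. This is a toy sketch: the helper name `embed_decoder_inputs`, the vocabulary size, the token ids and the random table values are all made up for illustration, and d is set to 4 only to keep the output small.

```python
import random

def embed_decoder_inputs(tokens, table):
    """Split a target sequence into decoder inputs and prediction targets.

    tokens: list of integer ids t_0..t_n (n+1 entries).
    table:  look-up table with one row of 2d floats per vocabulary id.
    Returns (embedded, targets), where embedded has n rows of width 2d.
    """
    inputs = tokens[:-1]   # t_0 .. t_{n-1} are fed to the decoder
    targets = tokens[1:]   # t_1 .. t_n are what the decoder should predict
    embedded = [list(table[i]) for i in inputs]
    return embedded, targets

d = 4                      # toy value for the number of inner channels
vocab_size = 10            # hypothetical toy vocabulary
rng = random.Random(0)
table = [[rng.uniform(-1, 1) for _ in range(2 * d)] for _ in range(vocab_size)]

tokens = [1, 5, 2, 7, 3]   # t_0..t_4, so n = 4
embedded, targets = embed_decoder_inputs(tokens, table)
print(len(embedded), len(embedded[0]), targets)  # prints: 4 8 [5, 2, 7, 3]
```

The embedded tensor has n rows and 2d columns, matching the n×2d shape stated above.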

2. Some Details of CNN

2.1. Masked One-dimensional Convolutions

- The decoder applies masked one-dimensional convolutions.

- The masking ensures that information from future tokens does not affect the prediction of the current token.

- The operation can be implemented either by zeroing out some of the weights of a wider kernel of size 2k-1 or by padding the input map.
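The two implementation routes mentioned above can be compared on a single channel. This is a minimal sketch, not the paper's code: the function names are hypothetical, and the kernel is laid out so that its last weight multiplies the current position.

```python
def causal_conv_padded(x, w):
    """Masked 1-D convolution via left padding of the input.

    x: list of floats (one channel). w: kernel of size k, where w[-1]
    multiplies the current position and w[0] the oldest visible one.
    Output position i only sees x[i-k+1 .. i].
    """
    k = len(w)
    padded = [0.0] * (k - 1) + list(x)
    return [sum(w[j] * padded[i + j] for j in range(k)) for i in range(len(x))]

def causal_conv_zeroed(x, w):
    """Same operation via a centred kernel of size 2k-1 with future taps zeroed."""
    k = len(w)
    wide = list(w) + [0.0] * (k - 1)                       # taps for i-k+1 .. i+k-1
    padded = [0.0] * (k - 1) + list(x) + [0.0] * (k - 1)   # symmetric padding
    return [sum(wide[j] * padded[i + j] for j in range(2 * k - 1))
            for i in range(len(x))]

x = [1.0, 2.0, 3.0, 4.0, 5.0]
w = [0.5, -1.0, 2.0]           # k = 3, so the wide kernel has 2k-1 = 5 taps
print(causal_conv_padded(x, w))  # prints: [2.0, 3.0, 4.5, 6.0, 7.5]
print(causal_conv_zeroed(x, w) == causal_conv_padded(x, w))  # prints: True
```

Both routes give the same output, and changing a later input never alters an earlier output, which is the property the masking is meant to guarantee.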

2.2. Dilation

- The masked convolutions use dilation to increase the receptive field of the target network.

- A dilation scheme is used whereby the dilation rates are doubled every layer up to a maximum rate r (r=16 in the paper's experiments).

- The scheme is repeated multiple times in the network always starting from a dilation rate of 1.
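The doubling-and-restart scheme can be written out as a small generator. This is a sketch under stated assumptions: the function names are hypothetical, and the receptive-field formula 1 + (k-1)·Σ(dilations) is the usual estimate for stacked masked dilated convolutions, which may differ from how the paper counts its own receptive field.

```python
def dilation_schedule(num_layers, max_rate=16):
    """Dilation rate per layer: 1, 2, 4, ... up to max_rate, then restart at 1."""
    rates, rate = [], 1
    for _ in range(num_layers):
        rates.append(rate)
        rate = 1 if rate >= max_rate else rate * 2
    return rates

def receptive_field(rates, kernel_size):
    """Estimate for stacked masked convolutions: 1 + (k - 1) * sum(dilations).

    Counts only the dilated layers themselves, nothing else in the network.
    """
    return 1 + (kernel_size - 1) * sum(rates)

rates = dilation_schedule(10)
print(rates)                                  # prints: [1, 2, 4, 8, 16, 1, 2, 4, 8, 16]
print(receptive_field(rates, kernel_size=3))  # prints: 125
```

Doubling the rate lets the receptive field grow exponentially with depth within each repetition, while restarting at 1 keeps fine-grained local context available throughout the stack.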

2.3. Residual Blocks

- Each layer is wrapped in a residual block that contains additional convolutional layers with filters of size 1×1 (He et al., 2016).

- There are two variants of the residual block: one with ReLUs, which is used in the machine translation experiments, and one with Multiplicative Units.

- Layer normalization (Ba et al., 2016) is used before the activation function.

- After a series of residual blocks of increased dilation, the network applies one more convolution and ReLU followed by a convolution and a final softmax layer.

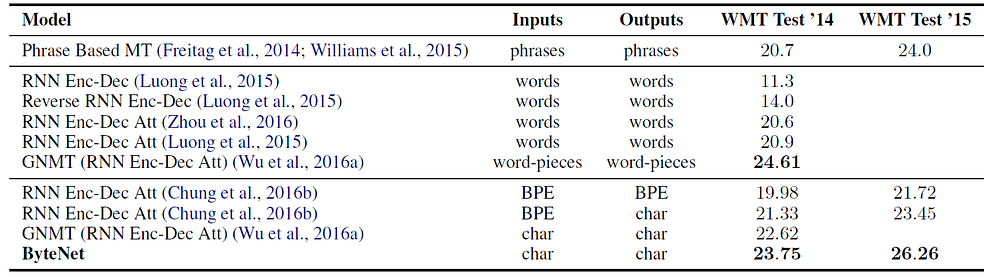
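A ReLU residual block of this kind can be sketched in plain Python. The ordering used here (layer norm, ReLU, 1×1 convolution from 2d to d channels, then a masked dilated convolution, then a 1×1 convolution back to 2d, plus a skip connection) is an assumption of this sketch based on the description above. All function names, the toy sizes and the random weights are hypothetical, and the learned gain and bias of layer normalization are omitted.

```python
import math
import random

def layer_norm(x, eps=1e-5):
    """Normalise each time step across its channels (no learned gain/bias)."""
    out = []
    for row in x:
        mean = sum(row) / len(row)
        var = sum((v - mean) ** 2 for v in row) / len(row)
        out.append([(v - mean) / math.sqrt(var + eps) for v in row])
    return out

def relu(x):
    return [[max(0.0, v) for v in row] for row in x]

def conv1x1(x, w):
    """1x1 convolution: the same channel-mixing matrix w[in][out] at every step."""
    return [[sum(row[i] * w[i][o] for i in range(len(w))) for o in range(len(w[0]))]
            for row in x]

def masked_dilated_conv(x, w, dilation):
    """Step t sees steps t, t-dilation, t-2*dilation, ... and nothing later.

    w[j][in][out]: tap j=0 is the current step, j=1 is `dilation` steps back.
    """
    n, c_out = len(x), len(w[0][0])
    out = [[0.0] * c_out for _ in range(n)]
    for t in range(n):
        for j, tap in enumerate(w):
            src = t - j * dilation
            if src < 0:
                continue  # implicit zero padding on the left
            for i, v in enumerate(x[src]):
                for o in range(c_out):
                    out[t][o] += v * tap[i][o]
    return out

def residual_block(x, w_in, w_conv, w_out, dilation):
    """x: n rows of 2d channels. Returns a tensor of the same shape."""
    h = conv1x1(relu(layer_norm(x)), w_in)                          # 2d -> d
    h = masked_dilated_conv(relu(layer_norm(h)), w_conv, dilation)  # d -> d
    h = conv1x1(relu(layer_norm(h)), w_out)                         # d -> 2d
    return [[a + b for a, b in zip(rx, rh)] for rx, rh in zip(x, h)]  # skip connection

rng = random.Random(0)

def rand(*shape):
    """Nested lists of uniform random floats with the given shape."""
    if len(shape) == 1:
        return [rng.uniform(-1, 1) for _ in range(shape[0])]
    return [rand(*shape[1:]) for _ in range(shape[0])]

d, n, k = 2, 6, 3  # toy sizes
x = rand(n, 2 * d)
w_in, w_conv, w_out = rand(2 * d, d), rand(k, d, d), rand(d, 2 * d)
y = residual_block(x, w_in, w_conv, w_out, dilation=2)
print(len(y), len(y[0]))  # prints: 6 4
```

Because layer normalization here acts on each time step separately and the only temporal operation is the masked convolution, the block as a whole remains causal: an output at step t does not depend on inputs after t.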

3. Character-Level Machine Translation Results

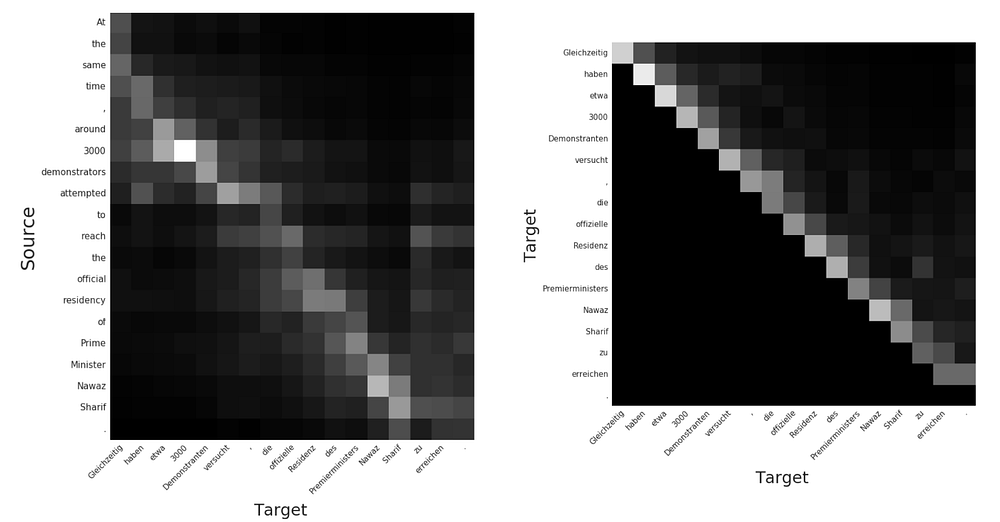

- The full ByteNet is evaluated on the WMT English to German translation task. NewsTest 2013 is used for validation and NewsTest 2014 and 2015 are used for testing.

- 323 characters are kept in the German vocabulary and 296 characters are kept in the English vocabulary.

- The ByteNet has 30 residual blocks in the encoder and 30 residual blocks in the decoder.

- The residual blocks are arranged in sets of five with corresponding dilation rates of 1, 2, 4, 8 and 16.

- The number of hidden units d is 800. The kernel size is 3 in both the source network and the target network, where the kernel is masked.

On NewsTest 2014, the ByteNet achieves the highest performance in character-level and subword-level neural machine translation.

Compared to the word-level systems it is second only to the version of GNMT that uses word-pieces.

On NewsTest 2015, ByteNet achieves the best published results at the time of the paper.

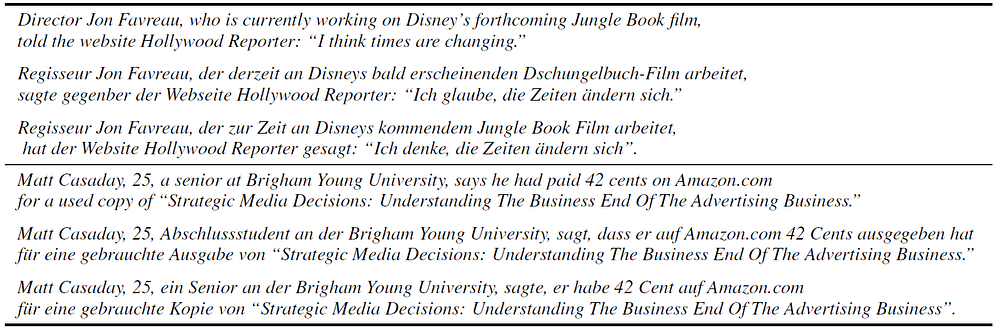

- The above table contains some of the unaltered generated translations from the ByteNet that highlight reordering and other phenomena such as transliteration.

Reference

[2016 arXiv] [ByteNet]

Neural Machine Translation in Linear Time
