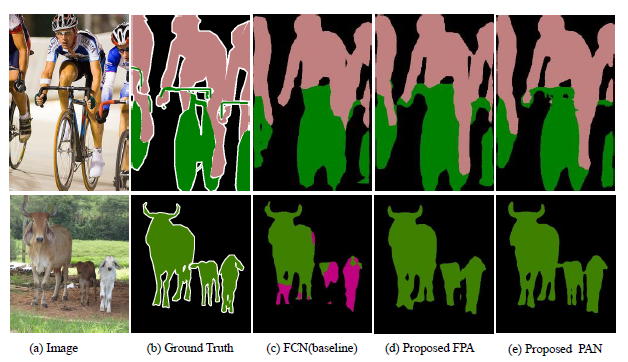

Review — PAN: Pyramid Attention Network for Semantic Segmentation (Semantic Segmentation)

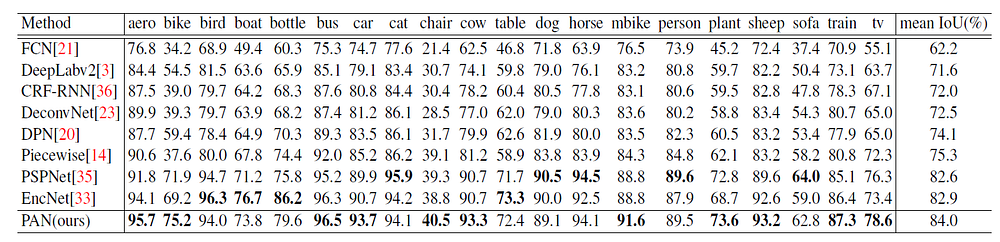

Using FPA & GAU Modules, Outperforms FCN, DeepLabv2, CRF-RNN, DeconvNet, DPN, PSPNet, RefineNet, and DUC.

In this story, Pyramid Attention Network for Semantic Segmentation (PAN), by Beijing Institute of Technology, Megvii Inc. (Face++), and Peking University, is reviewed. In this paper:

- A Feature Pyramid Attention (FPA) module is introduced to apply a spatial pyramid attention structure to the high-level output and combine it with global pooling to learn a better feature representation.

- A Global Attention Upsample (GAU) module is introduced on each decoder layer to provide global context that guides low-level features in selecting category localization details.

This is a paper in 2018 BMVC with over 200 citations. (Sik-Ho Tsang @ Medium)

Outline

- PAN: Network Architecture

- Feature Pyramid Attention (FPA) Module

- Global Attention Upsample (GAU) Module

- Ablation Study

- Experimental Results

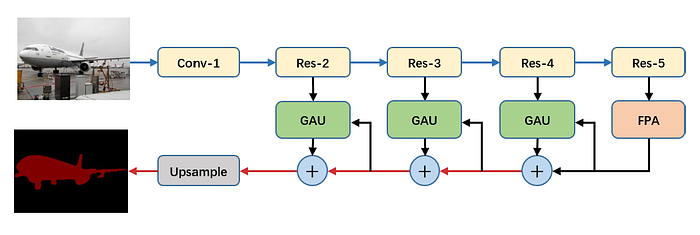

1. PAN: Network Architecture

- An ImageNet-pretrained ResNet-101 with dilated convolution (originally from DeepLab or DilatedNet) is used as the baseline.

- Dilated convolution with a rate of 2 is applied to the res5b blocks, so the output feature maps from ResNet are 1/16 the size of the input image, as in DeepLabv3+.

- The 7×7 convolutional layer in the original ResNet-101 is replaced by three 3×3 convolutional layers, as in PSPNet and DUC.

- The FPA module is used to gather dense pixel-level attention information from the output of ResNet. Combined with global context, the final logits are followed by the GAU module to generate the final prediction maps.
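The 1/16 output size follows from counting the stride-2 stages that remain once the last one is converted to dilation. Below is a minimal sketch of that arithmetic. It assumes the standard ResNet layout of five stride-2 stages; the function name, stage list, and the 512×512 crop size are illustrative and not taken from the paper.

```python
def output_stride(stages, dilated=()):
    """Return (overall stride, per-stage dilation rate).

    A stage listed in `dilated` gives up its stride and instead multiplies
    the running dilation rate by that stride, so resolution is preserved.
    """
    stride, dilation, rates = 1, 1, {}
    for name, s in stages:
        if name in dilated:
            dilation *= s   # keep resolution, widen the kernel's reach instead
        else:
            stride *= s     # ordinary downsampling
        rates[name] = dilation
    return stride, rates


# Assumed standard ResNet stage strides (not listed in the paper review).
RESNET_STAGES = [("conv1", 2), ("pool1", 2), ("res2", 1),
                 ("res3", 2), ("res4", 2), ("res5", 2)]

stride, rates = output_stride(RESNET_STAGES, dilated=("res5",))
crop = 512  # hypothetical input size
print(stride, rates["res5"], (crop // stride, crop // stride))  # 16 2 (32, 32)
```

Without the dilated stage the same function gives an overall stride of 32, which is why dilating res5 doubles the resolution of the features fed to the FPA module.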

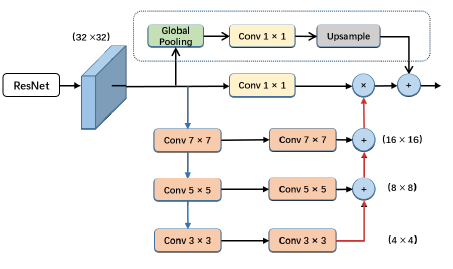

2. Feature Pyramid Attention (FPA) Module

- FPA fuses features from three different pyramid scales by implementing a U-shaped structure like Feature Pyramid Network (FPN).

- To better extract context from the different pyramid scales, 3×3, 5×5, and 7×7 convolutions are used in the pyramid structure, respectively.

- Since the resolution of high-level feature maps is small, using large kernel sizes does not add much computational burden.

- The pyramid structure then integrates information from the different scales step by step, which incorporates neighboring scales of context features more precisely.

- In addition, the original features from the CNN, after passing through a 1×1 convolution, are multiplied pixel-wise by the pyramid attention features.

- A global average pooling branch, which originated in SENet, is also added to the output features.

The Feature Pyramid Attention (FPA) module fuses context information from different scales and, at the same time, produces better pixel-level attention for high-level feature maps.
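The data flow described above can be sketched in NumPy. This is a simplified illustration, not the authors' implementation. It assumes 2×2 average pooling for downsampling, nearest-neighbour upsampling, one convolution per pyramid level, unchanged channel counts, no batch normalization, and random weights. The helper names (`conv2d`, `avg_pool2`, `upsample2`, `fpa`) and weight keys are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv2d(x, w):
    """'Same'-padded convolution. x: (C_in, H, W); w: (C_out, C_in, k, k), k odd."""
    k = w.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    windows = sliding_window_view(xp, (k, k), axis=(1, 2))  # (C_in, H, W, k, k)
    return np.einsum("chwij,ocij->ohw", windows, w)


def avg_pool2(x):
    """2x2 average pooling; H and W must be even."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))


def upsample2(x):
    """2x nearest-neighbour upsampling."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def relu(x):
    return np.maximum(x, 0.0)


def fpa(x, w):
    # Pyramid branch, downward path: 7x7, 5x5, 3x3 kernels at 1/2, 1/4, 1/8 scale.
    s1 = relu(conv2d(avg_pool2(x), w["k7"]))
    s2 = relu(conv2d(avg_pool2(s1), w["k5"]))
    s3 = relu(conv2d(avg_pool2(s2), w["k3"]))
    # Upward path: merge neighbouring scales step by step (U-shape).
    att = upsample2(s3) + s2
    att = upsample2(att) + s1
    att = upsample2(att)                      # back to the input resolution
    # Main branch: 1x1 convolution, then pixel-wise multiplication by attention.
    main = conv2d(x, w["k1"]) * att
    # Global pooling branch: (C, 1, 1) context, broadcast-added to every pixel.
    gp = conv2d(x.mean(axis=(1, 2), keepdims=True), w["gp"])
    return main + gp


rng = np.random.default_rng(0)
C = 8  # hypothetical channel count
weights = {name: rng.normal(scale=0.1, size=(C, C, k, k))
           for name, k in [("k7", 7), ("k5", 5), ("k3", 3), ("k1", 1), ("gp", 1)]}
x = rng.normal(size=(C, 16, 16))
out = fpa(x, weights)
print(out.shape)  # (8, 16, 16)
```

The output keeps the input resolution and channel count, so the module can sit directly between the encoder output and the decoder.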

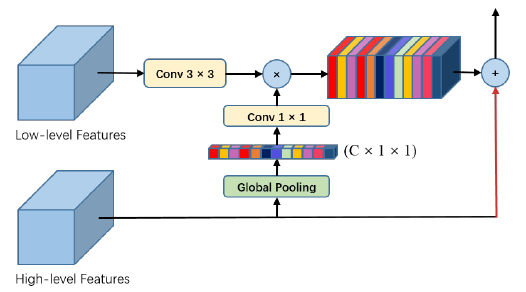

3. Global Attention Upsample (GAU) Module

- GAU performs global average pooling to provide global context that guides low-level features in selecting category localization details.

- In detail, a 3×3 convolution is performed on the low-level features to reduce the channels of the feature maps from the CNN.

- The global context generated from the high-level features is passed through a 1×1 convolution with batch normalization and ReLU non-linearity, then multiplied by the low-level features.

- Finally, the high-level features are added to the weighted low-level features and upsampled gradually.

GAU deploys feature maps of different scales more effectively and uses high-level features to provide guidance information to low-level feature maps in a simple way.
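The four steps above can be sketched as a single NumPy function. This is an illustrative sketch under stated assumptions: batch normalization is omitted, the high-level features are upsampled by nearest-neighbour repetition before the addition, and all names, shapes, and weights are hypothetical rather than taken from the paper.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def conv2d(x, w):
    """'Same'-padded convolution. x: (C_in, H, W); w: (C_out, C_in, k, k), k odd."""
    k = w.shape[-1]
    p = k // 2
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))
    windows = sliding_window_view(xp, (k, k), axis=(1, 2))  # (C_in, H, W, k, k)
    return np.einsum("chwij,ocij->ohw", windows, w)


def gau(low, high, w3, w1):
    """low: (C_low, H, W); high: (C, h, w) with H a multiple of h; returns (C, H, W)."""
    # 1. 3x3 convolution reduces low-level channels to match the high-level ones.
    low_reduced = conv2d(low, w3)
    # 2. Global average pooling of high-level features, then 1x1 conv + ReLU
    #    (batch normalization omitted in this sketch).
    context = high.mean(axis=(1, 2), keepdims=True)      # (C, 1, 1)
    channel_weights = np.maximum(conv2d(context, w1), 0.0)
    # 3. Channel-wise weighting of the low-level features.
    weighted_low = low_reduced * channel_weights
    # 4. Upsample high-level features and add them to the weighted low-level ones.
    scale = low.shape[1] // high.shape[1]
    high_up = high.repeat(scale, axis=1).repeat(scale, axis=2)
    return high_up + weighted_low


rng = np.random.default_rng(0)
low = rng.normal(size=(16, 8, 8))     # hypothetical low-level features
high = rng.normal(size=(4, 4, 4))     # hypothetical high-level features
w3 = rng.normal(scale=0.1, size=(4, 16, 3, 3))
w1 = rng.normal(scale=0.1, size=(4, 4, 1, 1))
out = gau(low, high, w3, w1)
print(out.shape)  # (4, 8, 8)
```

Because the attention weights have shape (C, 1, 1), each channel of the low-level features is scaled by a single number derived from the whole high-level map, which is what makes the guidance global.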

4. Ablation Study

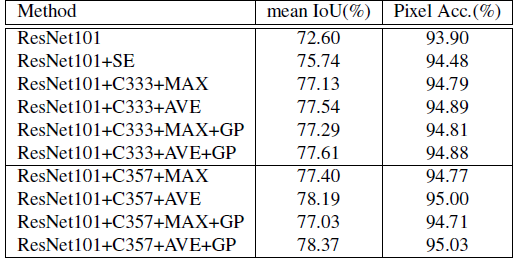

4.1. FPA Module

- C333: all convolution kernel sizes are 3×3.

- C357: the convolution kernel sizes are 3×3, 5×5, and 7×7, respectively.

- MAX and AVE: max pooling and average pooling operations.

- GP: global pooling branch.

- With all convolutions using a 3×3 kernel size, the ‘AVE’ setting improves the performance from 77.13% to 77.54% compared to the ‘MAX’ setting.

- Compared to the SENet attention module, the ‘C333’ and ‘AVE’ setting improves the performance by almost 1.8%.

- Replacing the 3×3 kernels with the larger ‘C357’ kernels improves the performance from 77.54% to 78.19%.

- Further adding the global pooling branch gives the best setting, which yields 78.37% Mean IoU and 95.03% Pixel Acc.

- The FPA module outperforms PSPNet and DeepLabv3 by about 1.5% and 1.2% respectively.
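For readers unfamiliar with the two metrics reported above, the sketch below computes Mean IoU and Pixel Acc. from a confusion matrix on a tiny made-up label map. It uses the standard definitions. The function names and the toy data are illustrative, and handling of an ignore label (as used in PASCAL VOC annotations) is left out for brevity.

```python
import numpy as np


def confusion_matrix(pred, target, num_classes):
    """Rows are ground-truth classes, columns are predicted classes."""
    idx = target.ravel() * num_classes + pred.ravel()
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)


def pixel_accuracy(cm):
    """Fraction of pixels whose predicted class matches the ground truth."""
    return np.diag(cm).sum() / cm.sum()


def mean_iou(cm):
    """Average over classes of intersection / union."""
    intersection = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - intersection
    present = union > 0                      # skip classes absent from both maps
    return (intersection[present] / union[present]).mean()


# Toy 2x3 label maps with three classes (made-up data).
target = np.array([[0, 0, 1],
                   [1, 2, 2]])
pred = np.array([[0, 1, 1],
                 [1, 2, 0]])

cm = confusion_matrix(pred, target, num_classes=3)
print(round(pixel_accuracy(cm), 4), round(mean_iou(cm), 4))  # 0.6667 0.5
```

In this toy case four of six pixels are correct, while the per-class IoUs are 1/3, 2/3, and 1/2, so Mean IoU is lower than pixel accuracy, which is typical since IoU also penalizes false positives per class.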

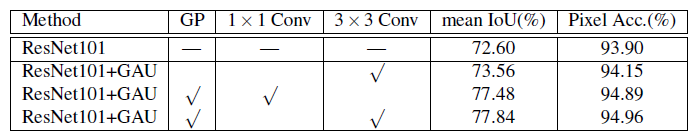

4.2. GAU Module

- Without the global context attention branch, GAU only improves the performance from 72.60% to 73.56%.

- Adding the global pooling operation to extract global context attention information improves the performance significantly, from 73.56% to 77.84%.

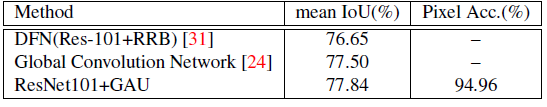

- The GAU module outperforms RRB by 1.2%.

- It is worth noting that Global Convolution Network (GCN) used the extra COCO dataset combined with the VOC dataset for training and obtained 77.50%, while the GAU module achieves 77.84% without using COCO for training.

5. Experimental Results

5.1. PASCAL VOC 2012

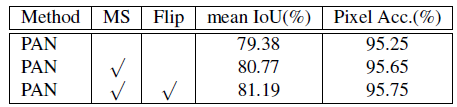

- With multi-scale inputs (scales = {0.5, 0.75, 1.0, 1.25, 1.5, 1.75}) and left-right flipping of the images during evaluation, 81.19% mIoU is achieved.
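Multi-scale and flip evaluation is commonly implemented by averaging the class scores over all scaled and mirrored copies of the image. The sketch below shows that pattern. Averaging scores, nearest-neighbour resizing, and the `toy_model` stand-in are assumptions for illustration; the review does not say how the paper merges the predictions.

```python
import numpy as np

SCALES = (0.5, 0.75, 1.0, 1.25, 1.5, 1.75)


def resize_nearest(x, out_h, out_w):
    """Nearest-neighbour resize of a (C, H, W) array."""
    rows = (np.arange(out_h) * x.shape[1] / out_h).astype(int)
    cols = (np.arange(out_w) * x.shape[2] / out_w).astype(int)
    return x[:, rows][:, :, cols]


def multi_scale_flip_predict(model, image, scales=SCALES):
    """Average class scores over scales and left-right flips, then take argmax."""
    _, h, w = image.shape
    total = 0.0
    for s in scales:
        scaled = resize_nearest(image, max(1, round(h * s)), max(1, round(w * s)))
        for flip in (False, True):
            inp = scaled[:, :, ::-1] if flip else scaled
            scores = model(inp)                   # (num_classes, H_s, W_s)
            if flip:
                scores = scores[:, :, ::-1]       # undo the flip on the output
            total = total + resize_nearest(scores, h, w)
    return (total / (2 * len(scales))).argmax(axis=0)


def toy_model(x):
    """Hypothetical stand-in for a network: two classes scored by brightness."""
    return np.concatenate([1.0 - x, x], axis=0)


image = np.zeros((1, 8, 8))
image[:, :, 4:] = 1.0          # dark left half, bright right half
pred = multi_scale_flip_predict(toy_model, image)
print(pred[0])  # [0 0 0 0 1 1 1 1]
```

With a real network, `model` would return per-class logits or probabilities, and bilinear interpolation would usually replace the nearest-neighbour resize.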

Reference

[2018 BMVC] [PAN]

Pyramid Attention Network for Semantic Segmentation

Semantic Segmentation

2015: [FCN] [DeconvNet] [DeepLabv1 & DeepLabv2] [CRF-RNN] [SegNet] [DPN]

2016: [ENet] [ParseNet] [DilatedNet]

2017: [DRN] [RefineNet] [ERFNet] [GCN] [PSPNet] [DeepLabv3] [LC] [FC-DenseNet] [IDW-CNN] [DIS] [SDN]

2018: [ESPNet] [ResNet-DUC-HDC] [DeepLabv3+] [PAN]

2019: [ResNet-38] [C3] [ESPNetv2]

2020: [DRRN Zhang JNCA’20]