Brief Review — A Comprehensive Survey on Heart Sound Analysis in the Deep Learning Era

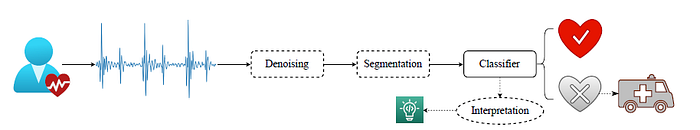

Overview of Heart Sound Classification Using Deep Learning

A Comprehensive Survey on Heart Sound Analysis in the Deep Learning Era

HSC Using DL Overview, by Leibniz University Hannover, Imperial College London, Griffith University, Beijing Institute of Technology, University of Augsburg

2023 arXiv v1 (Sik-Ho Tsang @ Medium)

Heart Sound Classification

2013 … 2023 [2LSTM+3FC, 3CONV+2FC] [NRC-Net] [Log-MelSpectrum + Modified VGGNet] [CNN+BiGRU] [CWT+MFCC+DWT+CNN+MLP] [LSTM U-Net (LU-Net)]


- Most reviews of heart sound analysis were published before 2017. The present survey is the first comprehensive overview of papers on heart sound analysis with deep learning from the past six years, 2017–2022.

Outline

- Categories

- DL-Based Classification Models

- Datasets

- Future Directions

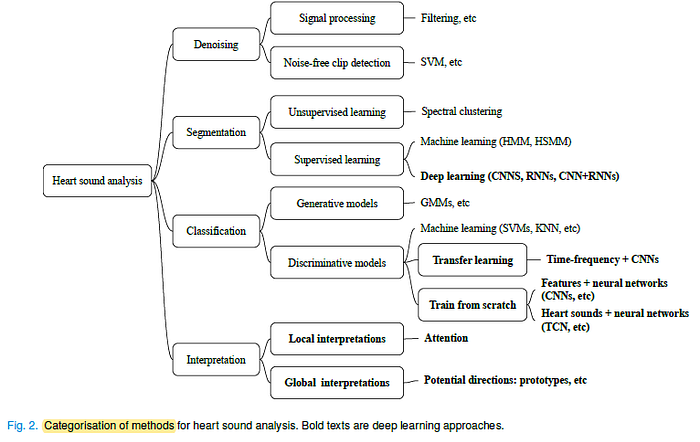

1. Categories

- There are multiple hierarchical categories for heart sound classification research.

Most deep learning methods focus on the classification models.

2. DL-Based Classification Models

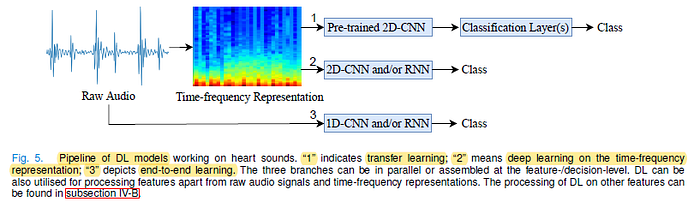

- As it is challenging to extract effective representations from raw heart sound signals, 2D time-frequency representations have been widely used as the input of 2D CNNs for heart sound classification [29], [39], [42], [49], [50], [61], [72], [73], [76], [77], [81], [115]–[119].

- 1D time-domain features can also be fed into DL models for heart sound classification.

- Typical DL models developed for ImageNet classification, such as AlexNet [135] and VGG [136], have been successfully used for heart sound classification [8], [31], [44], [137].

- In [138], pre-trained Audio Neural Networks (PANNs) trained on AudioSet were used for classifying heart sounds with inputs of time-frequency representations.
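To make the idea of a 2D time-frequency input more concrete, here is a minimal sketch of turning a 1D signal into a log-magnitude spectrogram that a 2D CNN could consume. It is not taken from the survey or from any cited paper: the function name `log_spectrogram`, the frame length, the hop size, the Hann window, and the synthetic 50 Hz sine used as a stand-in for a heart sound recording are all assumptions chosen for illustration.

```python
import numpy as np


def log_spectrogram(signal, frame_len=256, hop=128, eps=1e-10):
    """Return a (freq_bins, frames) log-magnitude spectrogram of a 1D signal.

    frame_len, hop and eps are illustrative defaults, not values from the survey.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if signal.ndim != 1 or len(signal) < frame_len:
        raise ValueError("signal must be 1D and at least frame_len samples long")

    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop

    # Slice the signal into overlapping frames: shape (n_frames, frame_len).
    frames = np.stack(
        [signal[i * hop : i * hop + frame_len] for i in range(n_frames)]
    )

    # Magnitude of the one-sided FFT of each windowed frame.
    magnitude = np.abs(np.fft.rfft(frames * window, axis=1))

    # Log compression; transpose so rows are frequency bins, columns are time.
    return np.log(magnitude + eps).T


# Synthetic stand-in for a recording: 2 seconds of a 50 Hz sine at 2000 Hz.
sample_rate = 2000
t = np.arange(2 * sample_rate) / sample_rate
toy_signal = np.sin(2 * np.pi * 50 * t)

spec = log_spectrogram(toy_signal)
print(spec.shape)  # (129, 30)
```

The resulting 2D array can be treated like a single-channel image. In practice, works in this area often use Mel-scaled or wavelet-based representations instead of a plain spectrogram; the plain version is used here only to keep the sketch short.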

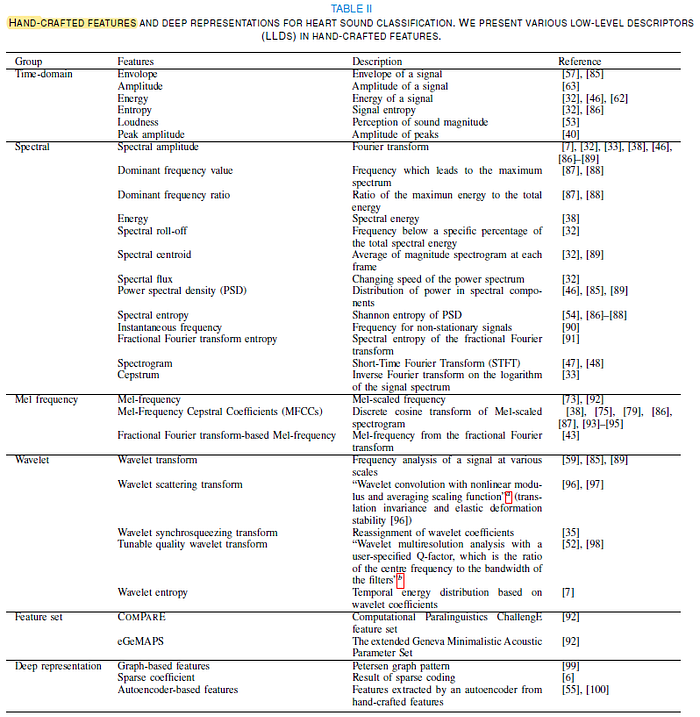

- The tables above list the research works that use hand-crafted features.

3. Datasets

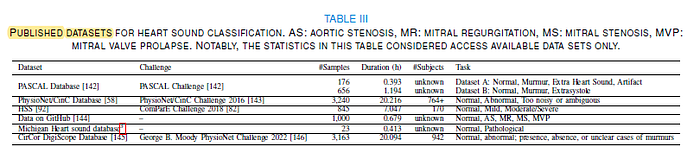

- The above table shows the available datasets.

4. Future Directions

- In the experiments of [149], using segmentation information did not significantly improve performance compared to models without it.

- Many approaches also use non-segmented heart sounds, which reduces the complexity of automated auscultation [10], [44], [52], [90], [115].

- More recently, a few studies have worked on hardware for automated diagnosis.

- Current studies are mostly based on heart sounds alone, although individual information such as age can affect model performance [152].

- In terms of ML and DL, we are currently witnessing the advent and adoption of foundation models [153], which could potentially be used for heart sound classification.

- Human-machine classification can be a solution that combines machines' predictions with humans' (crowd workers' and experts') predictions for more precise diagnosis [154].

- (Please kindly read the paper directly for more details.)