Brief Review — Breast Ultrasound Image Classification and Segmentation Using Convolutional Neural Networks

ResNet for Classification, Mask R-CNN for Segmentation

Breast Ultrasound Image Classification and Segmentation Using Convolutional Neural Networks,

ResNet+Mask R-CNN, by Beihang University and Capital Normal University

2018 PCM, Over 30 Citations (Sik-Ho Tsang @ Medium)

Medical Image Analysis, Image Classification, Image Segmentation

- A breast ultrasound dataset is proposed.

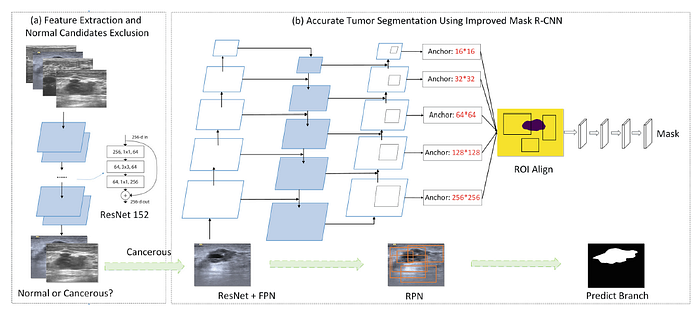

- A two-stage Computer-Aided Diagnosis (CAD) system using ResNet and Mask R-CNN is then proposed.

Outline

- Proposed Breast Ultrasound Dataset

- Proposed Two-Stage Computer-Aided Diagnosis (CAD) System

- Results

1. Proposed Breast Ultrasound Dataset

- The breast ultrasound images were collected from XiangYa Hospital of Hunan Province in 2016 and 2017. The dataset contains a total of 2600 images with a mean image size of 390×443 pixels.

- 1182 images contain a tumor area and 1418 do not. Of the 1182 cancerous images (collected from 394 patients), 890 were invasive ductal carcinomas, 164 were invasive carcinomas, 73 were non-special type invasive carcinomas, and 55 were other unspecified malignant lesions. Note that each cancerous image contains one or more tumors.
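As a quick sanity check on the figures above, the short sketch below confirms that the reported counts add up and derives the class balance. The variable names are only illustrative; the numbers are those quoted in this review.

```python
# Counts as quoted in the review above.
total_images = 2600
with_tumor, without_tumor = 1182, 1418
patients = 394
subtypes = {
    "invasive ductal carcinoma": 890,
    "invasive carcinoma": 164,
    "non-special type invasive carcinoma": 73,
    "other unspecified malignant lesion": 55,
}

# The two classes should account for every image,
# and the subtypes for every cancerous image.
assert with_tumor + without_tumor == total_images
assert sum(subtypes.values()) == with_tumor

tumor_fraction = with_tumor / total_images      # share of images with a tumor
images_per_patient = with_tumor / patients      # average cancerous images per patient
subtype_share = {name: n / with_tumor for name, n in subtypes.items()}

print(f"tumor fraction: {tumor_fraction:.1%}")
print(f"cancerous images per patient: {images_per_patient:.1f}")
for name, share in subtype_share.items():
    print(f"{name}: {share:.1%}")
```

The two classes are fairly balanced, while the tumor subtypes are dominated by invasive ductal carcinoma.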

2. Proposed Two-Stage Computer-Aided Diagnosis (CAD) System

2.1. Classification

- ResNet is used to classify whether the image is normal or cancerous.

- Normal images can be excluded before tumor segmentation.
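The two-stage flow (classify first, then segment only the images flagged as cancerous) can be sketched as below. This is a minimal sketch, not the authors' code: `is_cancerous` and `segment` are hypothetical callables standing in for the trained ResNet classifier and the Mask R-CNN segmenter.

```python
from typing import Callable, List, Optional, Sequence, TypeVar

Image = TypeVar("Image")
Mask = TypeVar("Mask")


def two_stage_cad(
    images: Sequence[Image],
    is_cancerous: Callable[[Image], bool],  # hypothetical stage-1 classifier
    segment: Callable[[Image], Mask],       # hypothetical stage-2 segmenter
) -> List[Optional[Mask]]:
    """Run segmentation only on images the classifier flags as cancerous.

    Returns one entry per input image: a mask for flagged images,
    None for images classified as normal.
    """
    results: List[Optional[Mask]] = []
    for image in images:
        if is_cancerous(image):
            results.append(segment(image))
        else:
            # Normal images are excluded before tumor segmentation.
            results.append(None)
    return results


# Toy usage with stand-in functions (strings instead of real images).
toy_images = ["tumor_a", "normal_b", "tumor_c"]
toy_masks = two_stage_cad(
    toy_images,
    is_cancerous=lambda name: name.startswith("tumor"),
    segment=lambda name: f"mask_of_{name}",
)
print(toy_masks)
```

Filtering first means the segmentation model is never run on images classified as normal, which saves computation and avoids spurious masks on those images.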

2.2. Segmentation

- Mask R-CNN is modified to use more anchor scales.

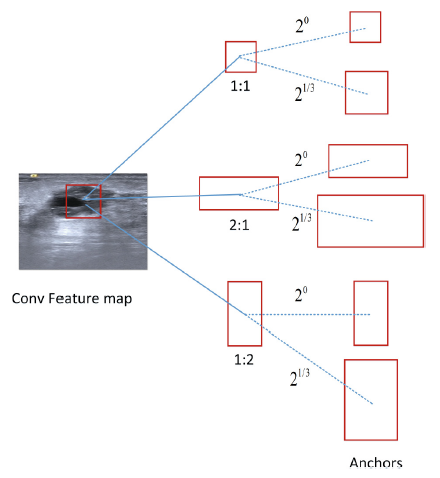

- Owing to the large variation in object scale across the candidate images (lesions range in size from 16×10 to 956×676), anchors with different scales and ratios are taken into account (illustrated in Fig. 2(b)), and the anchor sizes are modified to (16×16, 32×32, 64×64, 128×128, 256×256) accordingly.

- At each level, anchors at two scale multipliers, 2⁰ and 2^(1/3), are used for each of the original 3 aspect ratios. Thus, there are 6 anchors per level, and across levels they cover the scale range 16–322 pixels with respect to the resized input.
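The anchor arithmetic above can be checked with a small sketch. The base sizes and scale multipliers come from the text; the aspect ratios (0.5, 1, 2) are an assumption, since the review only says there are 3 of them, and `anchors_for_level` is an illustrative helper rather than part of any library.

```python
import math

BASE_SIZES = (16, 32, 64, 128, 256)          # one base size per pyramid level (from the text)
SCALE_MULTIPLIERS = (2 ** 0, 2 ** (1 / 3))   # two scales per level (from the text)
ASPECT_RATIOS = (0.5, 1.0, 2.0)              # ASSUMPTION: height/width ratios, not stated in the review


def anchors_for_level(base_size):
    """Return (width, height) pairs for one pyramid level.

    Each anchor keeps the area (base_size * multiplier)^2 while its
    height/width ratio is varied.
    """
    anchors = []
    for multiplier in SCALE_MULTIPLIERS:
        side = base_size * multiplier
        area = side * side
        for ratio in ASPECT_RATIOS:
            width = math.sqrt(area / ratio)
            height = width * ratio
            anchors.append((width, height))
    return anchors


all_levels = {size: anchors_for_level(size) for size in BASE_SIZES}
anchors_per_level = len(all_levels[BASE_SIZES[0]])
smallest_scale = min(BASE_SIZES) * min(SCALE_MULTIPLIERS)
largest_scale = max(BASE_SIZES) * max(SCALE_MULTIPLIERS)

print(f"anchors per level: {anchors_per_level}")
print(f"scale range: {smallest_scale:.0f} to {largest_scale:.1f} pixels")
```

With 2 scales and 3 ratios this gives the 6 anchors per level mentioned above, and the largest scale is 256 × 2^(1/3), which is where the upper bound of about 322 pixels comes from.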

2. Results

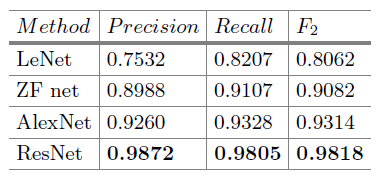

2.1. Classification

ResNet method achieves the highest Precision (95.84%), Recall (99.41%) and F2 measure (99.23%) among these four classification methods.

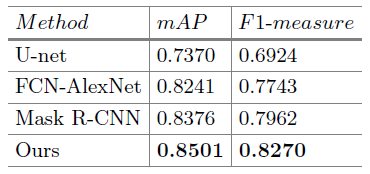
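For readers unfamiliar with the F2 measure, the sketch below shows the standard F-beta formula, where beta = 2 weights recall more heavily than precision. It assumes the paper uses this standard definition, and the confusion counts are made up for illustration, not taken from the paper.

```python
def precision_recall(tp, fp, fn):
    """Precision and recall from true-positive, false-positive, false-negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f_beta(precision, recall, beta=1.0):
    """Standard F-beta score; beta > 1 favours recall, beta < 1 favours precision."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# HYPOTHETICAL confusion counts, not from the paper.
p, r = precision_recall(tp=90, fp=10, fn=5)
f1 = f_beta(p, r, beta=1.0)
f2 = f_beta(p, r, beta=2.0)

print(f"precision={p:.4f} recall={r:.4f} F1={f1:.4f} F2={f2:.4f}")
```

Because recall exceeds precision in this toy example, F2 comes out higher than F1. A recall-weighted score suits a screening step, where missing a cancerous image is costlier than passing a normal one on to segmentation.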

3.2. Segmentation

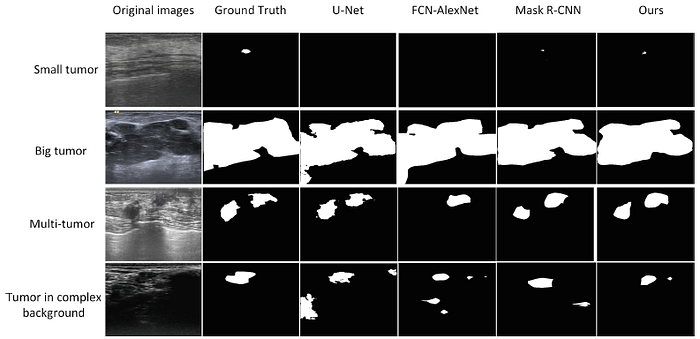

The modified Mask R-CNN achieves higher mAP (85.0% vs. 83.8%) and F1-measure (82.7% vs. 79.6%) than the original Mask R-CNN, benefiting from the use of more anchor scales.

As shown above, the modified Mask R-CNN achieves better segmentation results in most instances, even in complex environments with large background noise.

Reference

[2018 PCM] [ResNet+Mask R-CNN]

Breast Ultrasound Image Classification and Segmentation Using Convolutional Neural Networks
