Brief Review — Rectified Linear Units Improve Restricted Boltzmann Machines

Rectified Linear Unit (ReLU) Introduced

Rectified Linear Units Improve Restricted Boltzmann Machines

ReLU, by University of Toronto

2010 ICML, Over 17000 Citations (Sik-Ho Tsang @ Medium)

Activation Function, Restricted Boltzmann Machine, Image Classification, Face Recognition

- Rectified Linear Units (ReLUs) are introduced as hidden units for RBMs, and they outperform binary units that use the logistic sigmoid.

- This is a paper from Hinton’s research group.

Outline

- Rectified Linear Unit (ReLU)

- Image Classification Results

- Face Recognition Results

1. Rectified Linear Unit (ReLU)

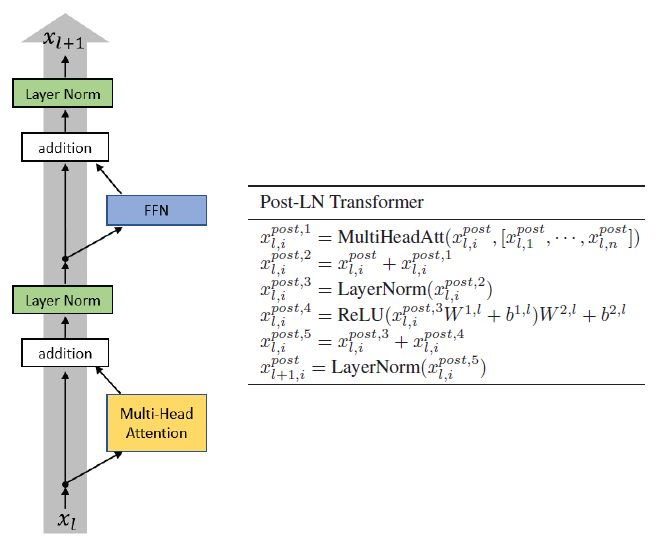

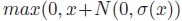

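- More precisely, a rectified linear unit can be viewed as many copies of a binary sigmoid unit that share the same weights but have biases offset by -0.5, -1.5, -2.5, and so on. Sampling an integer value correctly from this stepped sigmoid unit requires evaluating the logistic sigmoid function many times, so Noisy ReLU (NReLU) is proposed as a fast approximation whose sampled value is not constrained to be an integer:

max(0, x + N(0, σ(x)))

- where x is the total input to the unit, σ is the logistic sigmoid, and N(0, V) is Gaussian noise with zero mean and variance V.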
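The NReLU sampling rule above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the helper names (stepped_sigmoid_mean, sample_nrelu) and the use of 100 copies are assumptions. It first checks numerically that the expected output of the stepped sigmoid copies, the sum of σ(x - i + 0.5), stays close to softplus log(1 + e^x), and then draws NReLU samples, remembering that N(0, V) takes a variance, so the standard deviation is sqrt(σ(x)).

```python
import numpy as np


def sigmoid(x):
    """Logistic sigmoid 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + np.exp(-x))


def softplus(x):
    """log(1 + exp(x)), computed stably with logaddexp."""
    return np.logaddexp(0.0, x)


def stepped_sigmoid_mean(x, n_copies=100):
    """Expected output of n_copies binary units sharing input x, with
    biases offset by -0.5, -1.5, ...: sum_i sigmoid(x - i + 0.5).
    This costs n_copies sigmoid evaluations per unit."""
    offsets = np.arange(1, n_copies + 1) - 0.5
    return sigmoid(np.asarray(x, dtype=float)[..., None] - offsets).sum(axis=-1)


def sample_nrelu(x, rng):
    """Noisy ReLU sample: max(0, x + N(0, sigmoid(x))).
    N(0, V) takes a variance, so the standard deviation is sqrt(sigmoid(x))."""
    x = np.asarray(x, dtype=float)
    noise = rng.normal(0.0, np.sqrt(sigmoid(x)))
    return np.maximum(0.0, x + noise)


rng = np.random.default_rng(0)
x = np.linspace(-4.0, 4.0, 9)
print(np.max(np.abs(stepped_sigmoid_mean(x) - softplus(x))))  # about 0.01
print(sample_nrelu(x, rng))  # non-negative; close to x for large positive x
```

The sampler needs only one sigmoid and one Gaussian draw per unit, whereas the stepped version needs one sigmoid per copy, which is the cost NReLU is meant to avoid.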

2. Image Classification Results

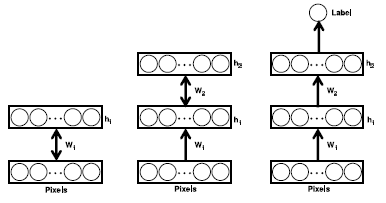

- Two hidden layers of NReLUs are greedily pre-trained as RBMs. (For RBM, please read Autoencoder.)

- The class label is represented as a K-dimensional binary vector with 1-of-K activation, where K is the number of classes.

- The classifier computes the probabilities of the K classes from the second-layer hidden activities h2 using the softmax function.
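The 1-of-K label encoding and the softmax classifier on top of h2 can be sketched as below. This is a hedged illustration rather than the paper's training code: h2 is random non-negative stand-in data instead of activities from pre-trained NReLU RBMs, and the layer sizes (20 hidden units, 5 classes), learning rate, and helper names are hypothetical. Only the softmax layer is trained, using mean cross-entropy (an assumed, common choice), whose gradient with respect to the logits is (probs - targets) divided by the batch size.

```python
import numpy as np


def one_hot(labels, num_classes):
    """1-of-K encoding: row n is all zeros except a 1 at column labels[n]."""
    labels = np.asarray(labels)
    targets = np.zeros((labels.size, num_classes))
    targets[np.arange(labels.size), labels] = 1.0
    return targets


def softmax(logits):
    """Row-wise softmax; subtracting each row's max avoids overflow."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def class_probabilities(h2, W_out, b_out):
    """p(class k | h2) = softmax(h2 @ W_out + b_out)[k]."""
    return softmax(h2 @ W_out + b_out)


def cross_entropy(probs, targets):
    """Mean negative log-probability of the correct class."""
    return -np.mean(np.sum(targets * np.log(probs + 1e-12), axis=1))


def sgd_step(h2, targets, W_out, b_out, lr=0.1):
    """One gradient step on the softmax layer (updates W_out, b_out in place).
    Returns the loss measured before the update."""
    probs = class_probabilities(h2, W_out, b_out)
    delta = (probs - targets) / h2.shape[0]  # d(mean loss) / d(logits)
    W_out -= lr * h2.T @ delta
    b_out -= lr * delta.sum(axis=0)
    return cross_entropy(probs, targets)


rng = np.random.default_rng(0)
h2 = np.maximum(0.0, rng.normal(size=(32, 20)))  # stand-in for second-layer activities
targets = one_hot(rng.integers(0, 5, size=32), num_classes=5)
W_out, b_out = np.zeros((20, 5)), np.zeros(5)
losses = [sgd_step(h2, targets, W_out, b_out) for _ in range(100)]
print(losses[0], losses[-1])  # starts at log(5), about 1.609, then decreases
```

With zero-initialized weights every class starts at probability 1/K, so the first loss is exactly log(K).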

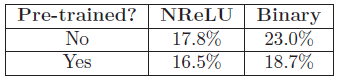

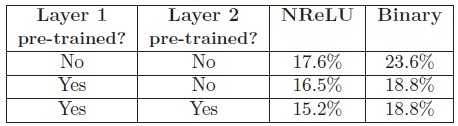

- Pre-training helps improve the performance of both unit types.

- Moreover, NReLUs without pre-training outperform binary units with pre-training.

- Pre-training both layers gives further improvement for NReLUs but not for binary units.

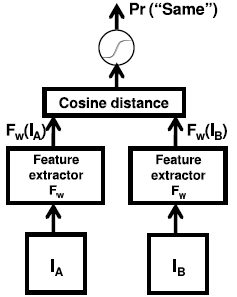

3. Face Recognition Results

- The feature extractor FW contains one hidden layer of NReLUs pre-trained as an RBM.

- The cosine distance between the feature vectors of two face images is used to decide whether they show the same person.

- Models using NReLUs are more accurate than those using binary units.
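The verification step can be sketched as follows. This is only an illustration under stated assumptions: the one-layer extractor uses the noise-free rectified activation max(0, x W + c) at test time, the weights and "images" are random stand-ins, and the names extract_features and best_threshold are hypothetical. Two faces are declared the same person when the cosine distance between their feature vectors is at or below a threshold; tuning that threshold by maximizing accuracy on labelled pairs is also an assumption.

```python
import numpy as np


def extract_features(x, W, c):
    """Hypothetical feature extractor: one layer of rectified units,
    using the noise-free activation max(0, x @ W + c) at test time."""
    return np.maximum(0.0, x @ W + c)


def cosine_distance(a, b, eps=1e-12):
    """1 - cos(angle between a and b); 0 for identical directions.
    eps guards against an all-zero feature vector."""
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    return 1.0 - np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + eps)


def best_threshold(distances, same_person):
    """Choose the cut-off that maximizes accuracy on labelled pairs,
    predicting 'same person' when distance <= threshold."""
    distances = np.asarray(distances, dtype=float)
    same_person = np.asarray(same_person, dtype=bool)
    candidates = np.sort(distances)
    accuracies = [np.mean((distances <= t) == same_person) for t in candidates]
    return candidates[int(np.argmax(accuracies))]


rng = np.random.default_rng(0)
W, c = rng.normal(size=(64, 16)), np.zeros(16)  # hypothetical sizes
face_a, face_b = rng.normal(size=64), rng.normal(size=64)  # stand-in image vectors
d = cosine_distance(extract_features(face_a, W, c), extract_features(face_b, W, c))
print(d)  # compare against a threshold tuned with best_threshold
```

Because rectified features are non-negative, the cosine distance between two feature vectors always lies between 0 and 1.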

This paper and AlexNet are often cited when ReLU is used. Classic!

Reference

[2010 ICML] [ReLU]

Rectified Linear Units Improve Restricted Boltzmann Machines

Image Classification

1989 … 2010 [ReLU] … 2022 [ConvNeXt] [PVTv2]

Face Recognition

2005 [Chopra CVPR’05] 2010 [ReLU] 2014 [DeepFace] [DeepID2] [CASIANet] 2015 [FaceNet] 2016 [N-pair-mc Loss]