[Paper] IQA-CNN++: Simultaneous Estimation of Image Quality and Distortion (Image Quality Assessment)

Outperforms IQA-CNN With 90% Fewer Parameters

In this story, Simultaneous estimation of image quality and distortion via multi-task convolutional neural networks (IQA-CNN+ & IQA-CNN++), by University of Maryland, SONY US Research Center, and NICTA and ANU, is presented. This is an extended version of IQA-CNN (2014 CVPR). In this paper:

- The authors argue that simply appending additional tasks to a state-of-the-art structure does not lead to an optimal solution.

- A compact structure is designed with nearly 90% fewer parameters compared to IQA-CNN.

This is a paper in 2015 ICIP with over 70 citations. (Sik-Ho Tsang @ Medium)

Outline

- Brief Review of IQA-CNN (2014 CVPR)

- IQA-CNN+

- IQA-CNN++

- Experimental Results

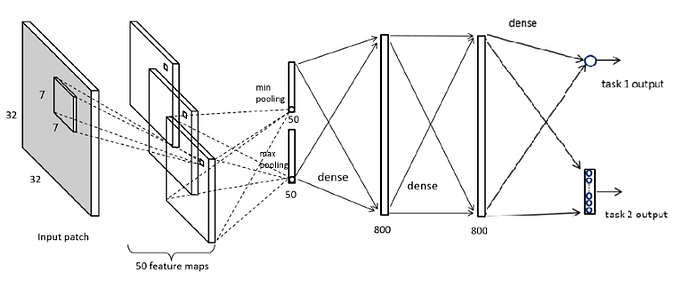

1. Brief Review of IQA-CNN (2014 CVPR)

1.1. Network Architecture

- The image patch is first locally normalized before being fed into the network.

- In the convolution layer, the locally normalized image patches are convolved with 50 filters. There is no activation after the convolution.

- Each feature map is pooled into one max value and one min value, i.e. the global max pooling and global min pooling respectively.

- Then, the first fully connected layer takes an input of size 2×K, where K = 50 is the number of filters (one max and one min value per feature map).

- ReLU is used in two fully connected layers.
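
The min/max pooling step above can be sketched in a few lines of plain Python. This is only an illustration: the function name `global_min_max_pool`, the toy feature maps, and the ordering of the output (all maxima first, then all minima) are assumptions, not details taken from the paper.

```python
def global_min_max_pool(feature_maps):
    """Reduce each 2-D feature map to its global max and global min.

    Returns a flat list of length 2*K: K maxima followed by K minima.
    (The max-then-min ordering is an assumption made for this sketch.)
    """
    maxima = [max(max(row) for row in fmap) for fmap in feature_maps]
    minima = [min(min(row) for row in fmap) for fmap in feature_maps]
    return maxima + minima


# Two toy 2x2 "feature maps" standing in for the K = 50 real ones.
toy_maps = [
    [[0.1, -0.4], [0.7, 0.2]],
    [[-1.0, 0.5], [0.3, 0.0]],
]
pooled = global_min_max_pool(toy_maps)
print(pooled)  # [0.7, 0.5, -0.4, -1.0]
```

With K = 50 feature maps, the same function returns 100 values, which matches the 2×K input of the first fully connected layer.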

1.2. Training & Testing

- Non-overlapping 32×32 patches are taken from large images.

- For training, each patch is assigned its source image’s ground truth score as its quality score.

- L1 norm is used as the loss function.

- For testing, the predicted patch scores are averaged for each image to obtain the image level quality score.
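
As a rough sketch of the patch-based training and testing scheme above: the helper names `patch_origins` and `image_score` are hypothetical, and dropping partial patches at the right and bottom borders is an assumption, since the handling of borders is not described here.

```python
def patch_origins(height, width, patch=32):
    """Top-left corners of non-overlapping patch x patch tiles.

    Partial tiles at the right/bottom border are dropped (an assumption).
    """
    return [(r, c)
            for r in range(0, height - patch + 1, patch)
            for c in range(0, width - patch + 1, patch)]


def image_score(patch_scores):
    """Image-level quality = mean of the predicted patch scores."""
    return sum(patch_scores) / len(patch_scores)


# Training: every patch inherits the ground truth score of its source image.
origins = patch_origins(100, 70)           # a toy 100x70 image
image_gt = 42.0                            # made-up ground truth score
patch_labels = [image_gt] * len(origins)

# Testing: average the per-patch predictions (made-up numbers here).
predicted = image_score([30.0, 34.0, 32.0])
print(len(origins), predicted)  # 6 32.0
```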

2. IQA-CNN+

- IQA-CNN+ is a naive extension of IQA-CNN.

- Identifying the distortion type present in an image is an important part of NR-IQA. An image is described much better when both its distortion type and its quality score are determined.

- As a baseline, a multi-task variant is built by directly adding a secondary task in the output layer.

- The structure for the multi-task is extended by adding a classification layer for classifying the distortion type.

- However, the network is not ideal for three reasons:

- IQA-CNN+ has a shallow convolutional structure (one layer), which makes the filter learning less efficient compared to deeper structures.

- There are too many parameters to learn.

- The arrangement of the fully connected layers may not facilitate multiple tasks.

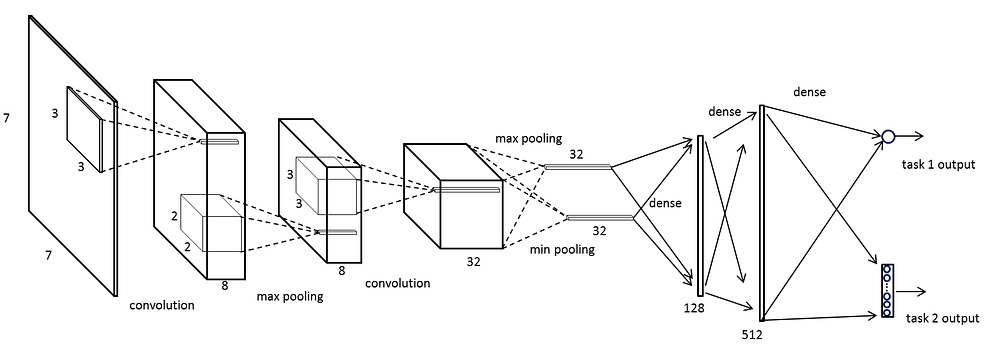

3. IQA-CNN++

- Two modifications are made:

- Increasing the number of convolutional layers while reducing the receptive field of the filters

- Modifying the fully connected layers to have a “fan-out” shape with significantly fewer neurons.

3.1. Network Architecture

- The first convolutional layer contains 8 kernels each of size 3×3, followed by a 2×2 pooling. The second convolutional layer contains 32 kernels, each of size 3×3×8.

- The 32 feature maps obtained by the second convolutional layer are pooled to 32 max and 32 min values, which form 64 inputs for the next layer.

- Again, no nonlinear neurons are used in the convolutional layers.

- There are 128 and 512 Rectified Linear Units (ReLUs) in the two fully connected layers respectively.

- Both the linear regression layer and logistic regression layer exist in the last part of the multi-task network.
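
To make the layer sizes above concrete, here is a small shape trace. It assumes a 32×32 input patch (as in IQA-CNN), unpadded stride-1 convolutions, and a stride-2 pooling; none of these is stated in this story, and the helper `out_size` is a hypothetical name.

```python
def out_size(size, kernel, stride=1):
    """Output side length of an unpadded convolution or pooling window."""
    return (size - kernel) // stride + 1


side = 32                          # assumed input patch size
side = out_size(side, 3)           # conv1: 8 kernels of 3x3
print("after conv1:", side)        # after conv1: 30
side = out_size(side, 2, stride=2) # 2x2 pooling, assumed stride 2
print("after pool:", side)         # after pool: 15
side = out_size(side, 3)           # conv2: 32 kernels of 3x3x8
print("after conv2:", side)        # after conv2: 13

# Global max + min pooling over the 32 maps removes the spatial size.
pooled_features = 2 * 32
fc_widths = [pooled_features, 128, 512]   # the "fan-out" shape
print(fc_widths)                   # [64, 128, 512]
```

Whatever the true spatial sizes are, the global min/max pooling always produces 64 values, so the fully connected part does not depend on these assumptions.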

3.2. Loss Function

- The loss of the primary task is the L1 norm of the prediction error, and the loss of the secondary task is the negative log likelihood.
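
A minimal sketch of the two loss terms for a single patch is shown below. How the two terms are combined is not described in this story, so the weighted sum and the weight `lam` are assumptions, as are the function names.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of class logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multitask_loss(pred_score, true_score, class_logits, true_class, lam=1.0):
    """L1 quality loss plus negative log likelihood of the true distortion.

    The weighted sum with `lam` is an assumption made for this sketch.
    """
    quality_loss = abs(pred_score - true_score)
    nll = -math.log(softmax(class_logits)[true_class])
    return quality_loss + lam * nll, quality_loss, nll


# Toy example: 5 distortion classes, completely uninformative logits.
total, quality_loss, nll = multitask_loss(40.0, 37.5, [0.0] * 5, true_class=2)
print(quality_loss)  # 2.5
```

With uniform logits over 5 classes the classification term equals ln(5), which is a handy sanity check for an untrained network.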

3.3. Model Size

- Originally, IQA-CNN (and IQA-CNN+) has approximately 7.2×10⁵ learnable parameters (weights of neurons).

- By comparison, IQA-CNN++ consists of roughly 7.7×10⁴ learnable parameters, which reduces the model size by nearly 90%.
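
The IQA-CNN++ figure can be roughly reproduced from the layer sizes in Section 3.1. The sketch below counts weights only (no biases) and assumes a single-channel input; exactly which parameters the paper counts is not stated here, so treat this as a back-of-the-envelope check. The function name and the `num_classes` argument are hypothetical.

```python
def iqa_cnn_pp_weights(num_classes=0, in_channels=1):
    """Weight count (no biases) for the layer sizes given in Section 3.1."""
    conv1 = 8 * 3 * 3 * in_channels   # 8 kernels of 3x3
    conv2 = 32 * 3 * 3 * 8            # 32 kernels of 3x3x8
    fc1 = 64 * 128                    # 64 pooled values -> 128 ReLUs
    fc2 = 128 * 512                   # 128 -> 512 ReLUs
    regression = 512 * 1              # quality score head
    classifier = 512 * num_classes    # distortion type head
    return conv1 + conv2 + fc1 + fc2 + regression + classifier


without_classifier = iqa_cnn_pp_weights()
print(without_classifier)             # 76616

# Compare against the approximately 7.2e5 parameters quoted for IQA-CNN.
reduction = 1 - without_classifier / 7.2e5
print(round(reduction, 3))            # 0.894
```

Almost all of the remaining weights sit in the second fully connected layer (128×512 = 65,536).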

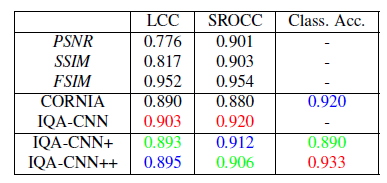

4. Experimental Results

4.1. LIVE

- The proposed multi-task CNNs (IQA-CNN+ and IQA-CNN++) outperformed the non-CNN based methods.

- For the distortion identification task, both multi-task CNNs achieved much higher accuracy. Compared with the state of the art, the gains are approximately 5% and 8% respectively. IQA-CNN++ achieves the best performance (0.951).

My Opinion: All IQA-CNN variants obtain similar results!

The additional distortion identification (classification part) in IQA-CNN+ does not seem to improve quality estimation, as we can see from the results of IQA-CNN and IQA-CNN+.

The main contribution is the parameter reduction at the fully connected layers in IQA-CNN++, which makes the model less prone to overfitting, as the results below suggest.

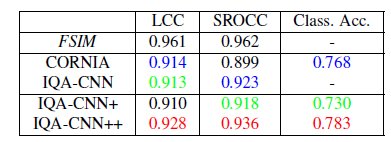

4.2. TID2008

- Similar observations hold on the TID2008 dataset.

4.3. Cross Dataset Test

- Training and validation are performed on LIVE, and then the obtained model is tested on TID2008 and CSIQ without parameter adaptation.

- Only the 4 distortions common to all three datasets are considered, namely JPEG2K, JPEG, WN, and BLUR.

- IQA-CNN++ trained on LIVE achieves an LCC/SROCC of 0.895/0.906 on TID2008, and 0.928/0.936 on CSIQ, outperforming other methods.
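
For readers unfamiliar with the two metrics reported above, here is a plain-Python sketch of the linear (Pearson) correlation coefficient and the Spearman rank-order correlation coefficient between predicted scores and ground truth scores. The function names and the toy numbers are made up, and this is not the paper's evaluation code.

```python
import math


def pearson(x, y):
    """Linear correlation coefficient between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average
        i = j + 1
    return result


def spearman(x, y):
    """Rank-order correlation = Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


predicted = [1.0, 2.0, 3.0, 4.0]      # toy predictions
ground_truth = [1.0, 4.0, 9.0, 16.0]  # monotonic but non-linear relation
lcc = pearson(predicted, ground_truth)
srocc = spearman(predicted, ground_truth)
```

In this toy case the rank-order correlation is 1 because the ordering is preserved perfectly, while the linear correlation is slightly below 1 because the relation is not linear.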

Reference

[2015 ICIP] [IQA-CNN++]

Simultaneous estimation of image quality and distortion via multi-task convolutional neural networks

Image Quality Assessment

[IQA-CNN] [IQA-CNN++] [DeepSim] [DeepIQA]