Review — Scene Parsing through ADE20K Dataset (Semantic Segmentation)

Cascade-SegNet & Cascade-DilatedNet, Formed Using the Cascade Segmentation Module, Outperform DilatedNet, SegNet & FCN

In this story, Scene Parsing through ADE20K Dataset (Cascade-SegNet & Cascade-DilatedNet), by the Massachusetts Institute of Technology and the University of Toronto, is briefly reviewed. In this paper:

- A Cascade Segmentation Module is proposed to parse a scene into stuff, objects, and object parts in a cascade, improving over the baselines.

- This module is integrated with SegNet and DilatedNet to form Cascade-SegNet and Cascade-DilatedNet, respectively.

This is a 2017 CVPR paper with over 1000 citations. (Sik-Ho Tsang @ Medium)

Outline

- Cascade Segmentation Module

- Experimental Results

1. Cascade Segmentation Module

- The frequency with which objects appear in scenes follows a long-tail distribution, and so do the pixel ratios of the objects.

For example, stuff classes such as ‘wall’, ‘building’, ‘floor’, and ‘sky’ occupy more than 40% of all the annotated pixels, while discrete objects at the tail of the distribution, such as ‘vase’ and ‘microwave’, occupy only 0.03% of the annotated pixels.

Because of this long-tail distribution, a semantic segmentation network can easily be dominated by the most frequent stuff classes.
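To make the long-tail statistic concrete, here is a minimal sketch of how per-class pixel ratios could be counted from annotated label maps. It assumes label maps are 2-D integer arrays of class indices and that index 0 marks unannotated pixels; the function name `pixel_ratios` is hypothetical.

```python
import numpy as np

def pixel_ratios(label_maps, num_classes, ignore_index=0):
    """Fraction of all annotated pixels that belong to each class.

    label_maps: iterable of 2-D integer arrays of class indices.
    Pixels equal to ignore_index are treated as unannotated (assumption).
    Negative ids and ids >= num_classes are not counted.
    """
    counts = np.zeros(num_classes, dtype=np.int64)
    for label_map in label_maps:
        flat = np.asarray(label_map, dtype=np.int64).ravel()
        valid = (flat >= 0) & (flat < num_classes) & (flat != ignore_index)
        counts += np.bincount(flat[valid], minlength=num_classes)
    total = counts.sum()
    return counts / total if total > 0 else counts.astype(float)

# Toy example: class 1 plays the role of a frequent stuff class ('wall'),
# classes 2 and 3 the role of rare discrete objects.
maps = [np.array([[1, 1, 1], [1, 1, 2]]), np.array([[0, 1], [1, 3]])]
ratios = pixel_ratios(maps, num_classes=4)
head_first = np.argsort(-ratios)  # class ids from most to least frequent
```

Sorting the ratios in descending order exposes the "head" (stuff) and the long "tail" (rare objects) of the distribution.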

- The semantic classes of the scenes are therefore grouped into three macro classes: stuff (sky, road, building, etc.), foreground objects (car, tree, sofa, etc.), and object parts (car wheels and doors, a person's head and torso, etc.).
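A minimal sketch of the macro-class grouping, using only the example classes named above. The `Macro` enum, the `MACRO_OF` and `PARENT_OF` tables, and `group_by_macro` are hypothetical names, and the part entries are illustrative; the full class-to-macro assignment used in the paper is not reproduced here.

```python
from enum import Enum

class Macro(Enum):
    STUFF = "stuff"
    OBJECT = "object"
    PART = "part"

# Illustrative subset built from the examples in the text (hypothetical table).
MACRO_OF = {
    "sky": Macro.STUFF,
    "road": Macro.STUFF,
    "building": Macro.STUFF,
    "car": Macro.OBJECT,
    "tree": Macro.OBJECT,
    "sofa": Macro.OBJECT,
    "person": Macro.OBJECT,
    "car wheel": Macro.PART,
    "car door": Macro.PART,
    "person head": Macro.PART,
    "person torso": Macro.PART,
}

# Each part is tied to the object it belongs to (hypothetical structure).
PARENT_OF = {
    "car wheel": "car",
    "car door": "car",
    "person head": "person",
    "person torso": "person",
}

def group_by_macro(macro_of):
    """Return {Macro: [class names]}, preserving the table's order."""
    groups = {m: [] for m in Macro}
    for name, macro in macro_of.items():
        groups[macro].append(name)
    return groups

groups = group_by_macro(MACRO_OF)
```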

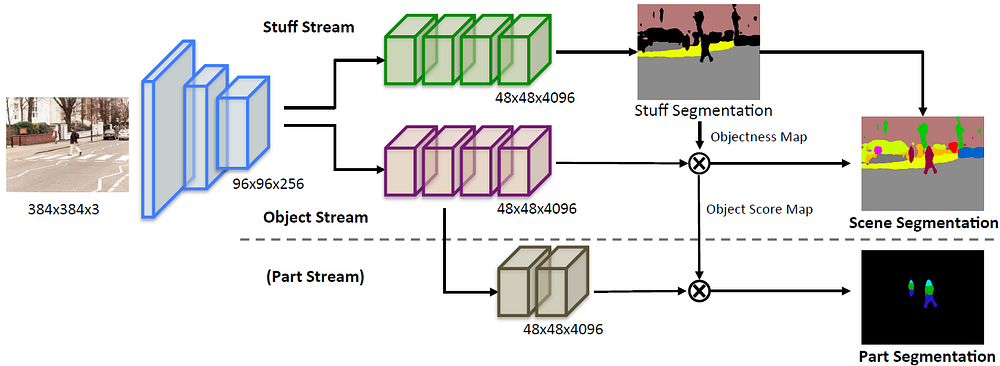

- Different streams of high-level layers are used to represent the different macro classes and to recognize the classes assigned to each, as shown above.

- More specifically, the stuff stream is trained to classify all the stuff classes plus one foreground ‘object’ class that covers all the non-stuff classes.

- The object stream is trained to classify the discrete objects.

- The part stream further segments parts within each object score map predicted by the object stream.

- Each stream has its own training loss at the end.
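One way the stuff and object streams could be combined at inference is sketched below. It assumes the stuff stream's last channel is the catch-all ‘object’ class and that the object stream's prediction is used only where the stuff stream predicts ‘object’. The channel layout, the output label numbering and `merge_cascade` are assumptions of this sketch, not details given in the review; the part stream would be handled analogously inside each predicted object region and is omitted.

```python
import numpy as np

def merge_cascade(stuff_scores, object_scores):
    """Combine stuff-stream and object-stream outputs into one label map.

    stuff_scores:  (S + 1, H, W) scores; channels 0..S-1 are stuff classes,
                   channel S is the catch-all 'object' class (assumed layout).
    object_scores: (O, H, W) scores over the discrete object classes.
    Returns an (H, W) int map: 0..S-1 = stuff, S..S+O-1 = objects.
    """
    num_stuff = stuff_scores.shape[0] - 1
    stuff_pred = stuff_scores.argmax(axis=0)
    is_object = stuff_pred == num_stuff  # pixels handed off to the object stream
    object_pred = object_scores.argmax(axis=0) + num_stuff
    return np.where(is_object, object_pred, stuff_pred)

# Toy input: 2 stuff classes (e.g. wall, sky), 2 object classes, a 1x3 image.
stuff_scores = np.array([[[0.9, 0.1, 0.1]],    # wall
                         [[0.05, 0.1, 0.8]],   # sky
                         [[0.05, 0.8, 0.1]]])  # catch-all 'object'
object_scores = np.array([[[0.5, 0.3, 0.5]],
                          [[0.5, 0.7, 0.5]]])
labels = merge_cascade(stuff_scores, object_scores)
```

Here the middle pixel is claimed by the catch-all ‘object’ channel, so its label comes from the object stream.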

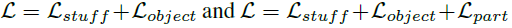

- The network with two streams (stuff+objects) or three streams (stuff+objects+parts) can be trained end-to-end:

- The streams share the weights of the lower layers.

- The proposed module is integrated into two baseline networks, SegNet and DilatedNet.
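A sketch of the per-stream losses for the two-stream (stuff+objects) variant, in NumPy. It assumes pixel-wise softmax cross-entropy for each stream, equal weighting of the two losses, fully labeled ground truth, and that the object-stream loss is computed only on ground-truth object pixels; all function names are hypothetical. Since the streams share lower-layer weights, minimizing this summed loss in a deep-learning framework would train them jointly; this sketch only evaluates the loss value.

```python
import numpy as np

def log_softmax(scores, axis=0):
    """Numerically stable log-softmax along the class axis."""
    shifted = scores - scores.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def masked_ce(scores, target, mask):
    """Mean pixel-wise cross-entropy over pixels where mask is True.

    scores: (C, H, W); target: (H, W) ints in [0, C); mask: (H, W) bool.
    """
    if not mask.any():
        return 0.0
    logp = log_softmax(scores, axis=0)
    picked = np.take_along_axis(logp, target[None], axis=0)[0]  # (H, W)
    return float(-picked[mask].mean())

def cascade_loss(stuff_scores, object_scores, label, num_stuff):
    """Sum of the stuff-stream and object-stream losses (equal weights assumed).

    label: (H, W) ground truth; 0..num_stuff-1 are stuff, >= num_stuff objects.
    """
    is_object = label >= num_stuff
    stuff_target = np.where(is_object, num_stuff, label)       # collapse objects
    object_target = np.where(is_object, label - num_stuff, 0)  # 0 is a dummy, masked out
    all_pixels = np.ones_like(is_object)
    return (masked_ce(stuff_scores, stuff_target, all_pixels)
            + masked_ce(object_scores, object_target, is_object))
```

With uniform scores, each stream contributes log(number of its classes), which is a quick sanity check on the masking.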

2. Experimental Results

2.1. Objective Evaluation

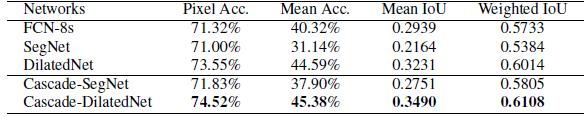

- The top 150 objects ranked by their total pixel ratios are selected from the ADE20K dataset and used to build a scene parsing benchmark of ADE20K, termed MIT SceneParse150.
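The class-selection step can be sketched as ranking classes by total pixel ratio, keeping the top k, and building a lookup table that relabels annotations. Mapping every dropped class to 0 and numbering the kept classes 1..k in descending ratio order are assumptions of this sketch; `build_benchmark_mapping` is a hypothetical name.

```python
import numpy as np

def build_benchmark_mapping(ratios, k=150):
    """Keep the k classes with the largest total pixel ratio.

    ratios: 1-D array where ratios[c] is the pixel ratio of original class c.
    Returns (kept, lut): kept lists original class ids in descending ratio
    order; lut maps every original id to a new id in 1..k, or 0 if dropped.
    """
    ratios = np.asarray(ratios, dtype=float)
    kept = np.argsort(-ratios, kind="stable")[:k]
    lut = np.zeros(len(ratios), dtype=np.int64)
    lut[kept] = np.arange(1, len(kept) + 1)
    return kept, lut

# Toy example with 4 original classes, keeping the top 2.
kept, lut = build_benchmark_mapping([0.1, 0.5, 0.05, 0.35], k=2)
relabeled = lut[np.array([[1, 2], [3, 0]])]
```

`lut[label_map]` relabels a whole annotation in a single indexing operation, which keeps the conversion of a large dataset cheap.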

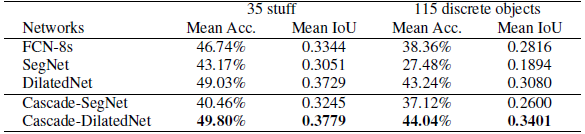

- Among the baselines, the DilatedNet achieves the best performance on the SceneParse150.

- The cascade networks, Cascade-SegNet and Cascade-DilatedNet, both outperform their original baselines.

2.2. Visualization

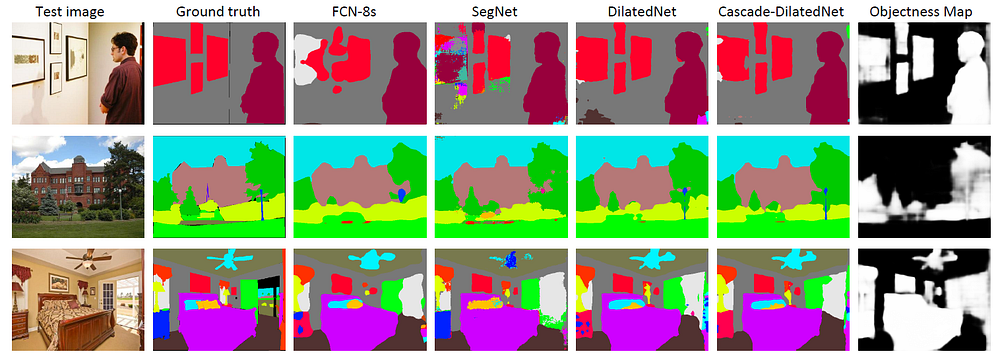

- Some examples of segmentation results are visualized above.

2.3. Possible Applications

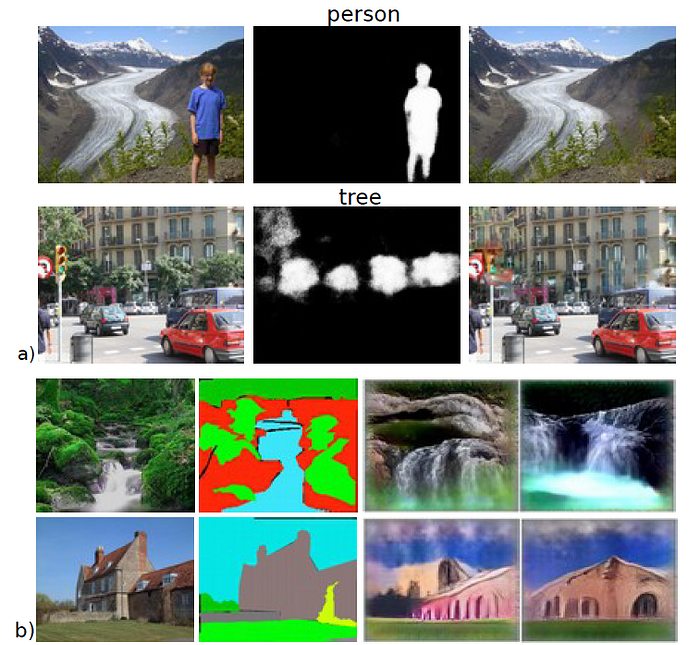

- (a) Automatic image content removal using the object score maps predicted by the scene parsing network.

- (b) Scene synthesis.
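For application (a), a removal mask could be derived from the object score maps roughly as follows. The probability threshold, the small mask dilation and the function name `removal_mask` are all hypothetical choices; the masked pixels would then be filled by a separate image-inpainting method, which is not shown.

```python
import numpy as np

def removal_mask(object_scores, class_ids, threshold=0.5, grow=1):
    """Binary mask of pixels to erase for the selected object classes.

    object_scores: (O, H, W) per-class probabilities from the object stream.
    A pixel is marked if any selected class scores above `threshold`; the
    mask is then grown by `grow` pixels (4-neighbourhood) to cover borders.
    """
    mask = (object_scores[list(class_ids)] > threshold).any(axis=0)
    for _ in range(grow):
        grown = mask.copy()
        grown[1:, :] |= mask[:-1, :]   # spread downward
        grown[:-1, :] |= mask[1:, :]   # spread upward
        grown[:, 1:] |= mask[:, :-1]   # spread right
        grown[:, :-1] |= mask[:, 1:]   # spread left
        mask = grown
    return mask

# Toy example: object class 1 is confidently detected at a single pixel.
scores = np.zeros((2, 5, 5))
scores[1, 2, 2] = 0.9
mask = removal_mask(scores, [1], grow=1)
```

Growing the mask slightly is a common precaution so that object boundaries, where score maps tend to be soft, are also erased before inpainting.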

Reference

[2017 CVPR] [Cascade-SegNet & Cascade-DilatedNet]

Scene Parsing through ADE20K Dataset

Semantic Segmentation

2015: [FCN] [DeconvNet] [DeepLabv1 & DeepLabv2] [CRF-RNN] [SegNet] [DPN]

2016: [ENet] [ParseNet] [DilatedNet]

2017: [DRN] [RefineNet] [ERFNet] [GCN] [PSPNet] [DeepLabv3] [LC] [FC-DenseNet] [IDW-CNN] [DIS] [SDN] [Cascade-SegNet & Cascade-DilatedNet]

2018: [ESPNet] [ResNet-DUC-HDC] [DeepLabv3+] [PAN] [DFN] [EncNet]

2019: [ResNet-38] [C3] [ESPNetv2]

2020: [DRRN Zhang JNCA’20]