Brief Review — Bidirectional Recurrent Neural Networks

Bidirectional RNN (BRNN), One Forward, One Backward

Bidirectional Recurrent Neural Networks

Bidirectional RNN (BRNN), by ATR Interpreting Telecommunications Research Laboratory

1997 TSP, Over 7000 Citations (Sik-Ho Tsang @ Medium)

Recurrent Neural Network, RNN, Sequence Model

- A regular recurrent neural network (RNN) is extended to a bidirectional recurrent neural network (BRNN) by training it simultaneously in the positive and negative time directions.

Outline

- Bidirectional Recurrent Neural Network (BRNN)

- Results

1. Bidirectional Recurrent Neural Network (BRNN)

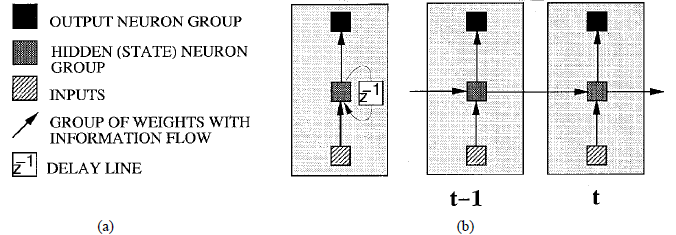

1.1. Conventional Uni-Directional RNN

- The figure above shows a basic RNN architecture with a delay line, unfolded in time for two time steps.

Predictions at a given time step always rely only on the current and previous inputs.
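To make the one-directional dependence concrete, here is a minimal sketch of a scalar RNN in plain Python. The function name `forward_rnn`, the tanh nonlinearity, and the weight values are illustrative assumptions, not taken from the paper. It only shows that changing a later input cannot affect earlier states.

```python
import math

def forward_rnn(xs, w_x=0.5, w_h=0.8, bias=0.0):
    """Toy scalar RNN (hypothetical weights): h_t = tanh(w_x*x_t + w_h*h_{t-1} + bias)."""
    h = 0.0          # initial state
    states = []
    for x in xs:     # strictly left to right in time
        h = math.tanh(w_x * x + w_h * h + bias)
        states.append(h)
    return states

base = forward_rnn([1.0, 2.0, 3.0, 4.0])
changed = forward_rnn([1.0, 2.0, 3.0, -9.0])  # only the last input differs

# States before the changed input are identical: no future information is used.
print(base[:3] == changed[:3])  # True
```

Only the state at the final time step differs between the two runs.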

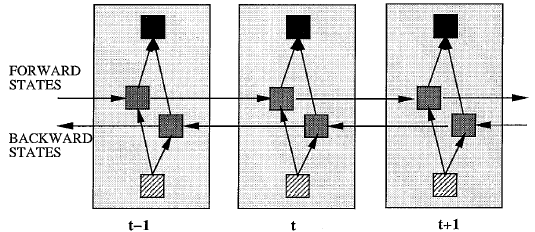

1.2. Proposed Bidirectional RNN (BRNN)

By using a bidirectional RNN (BRNN), future information can also be used for prediction.
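A minimal sketch of the idea in plain Python: one recurrence runs over the sequence left to right, a second runs right to left, and the output at each time step combines both states. The names `run_rnn` and `brnn`, the scalar states, the tanh nonlinearity, and all weight values are assumptions for illustration only, not the paper's exact formulation.

```python
import math

def run_rnn(xs, w_x, w_h):
    """Toy scalar recurrence: h_t = tanh(w_x*x_t + w_h*h_prev)."""
    h, states = 0.0, []
    for x in xs:
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states

def brnn(xs, w_fwd=(0.5, 0.8), w_bwd=(0.3, 0.6), v_f=1.0, v_b=1.0):
    """Combine a forward pass and a backward pass (hypothetical weights)."""
    fwd = run_rnn(xs, *w_fwd)               # state at t summarizes x_1..x_t
    bwd = run_rnn(xs[::-1], *w_bwd)[::-1]   # state at t summarizes x_t..x_T
    return [v_f * f + v_b * b for f, b in zip(fwd, bwd)]

y_base = brnn([1.0, 2.0, 3.0, 4.0])
y_changed = brnn([1.0, 2.0, 3.0, -9.0])  # only the last input differs

# The first output now changes, because the backward state carries future input.
print(y_base[0] != y_changed[0])  # True
```

Note the second reversal in `brnn`: it realigns the backward states with the original time axis so that index `t` refers to the same time step in both lists.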

2. Results

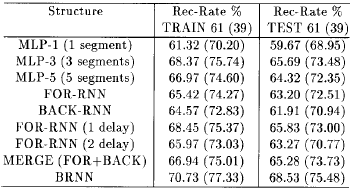

- The TIMIT phoneme database is a well-established database consisting of 6300 sentences spoken by 630 speakers.

- The training data set consists of 3696 sentences from 462 speakers, and the test data set consists of 1344 sentences from 168 speakers.

- The data is segmented, which gives 142910 phoneme segments for training and 51681 for testing.

- MERGE denotes merging the results of two separately trained networks, FOR-RNN and BACK-RNN.
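The merging rule itself is not spelled out here. As a hedged illustration, two common ways to combine class probabilities from two networks are an arithmetic mean and a renormalized geometric mean. The function names and the example probabilities below are made up for this sketch and are not results from the paper.

```python
import math

def merge_linear(p_for, p_back):
    """Arithmetic mean of two class distributions."""
    return [(a + b) / 2 for a, b in zip(p_for, p_back)]

def merge_log(p_for, p_back):
    """Geometric mean of two class distributions, renormalized to sum to 1."""
    g = [math.sqrt(a * b) for a, b in zip(p_for, p_back)]
    z = sum(g)
    return [v / z for v in g]

# Hypothetical per-class probabilities for one segment from each network.
p_for = [0.7, 0.2, 0.1]
p_back = [0.5, 0.4, 0.1]

merged = merge_linear(p_for, p_back)
predicted_class = max(range(len(merged)), key=merged.__getitem__)
print(predicted_class)  # 0
```

Either rule keeps the two networks independent at training time; they only interact when their outputs are combined, which is the key difference from a BRNN trained jointly in both directions.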

BRNN outperforms all the MLP and RNN baselines.

- A modified BRNN structure is also proposed.

- First, instead of connecting the forward and backward states to the current output states, they are connected to the next and previous output states, respectively, and the inputs are directly connected to the outputs.

- Second, if in the resulting structure the first L weight connections from the inputs to the backward states and the inputs to the outputs are cut, then only discrete input information from can be used to make predictions.

The combination of the forward and backward modified BRNN structures results in much better performance than the individual structures.

This is likely one of the earliest RNN papers to propose the bidirectional RNN idea.

Reference

[1997 TSP] [Bidirectional RNN (BRNN)]

Bidirectional Recurrent Neural Networks
