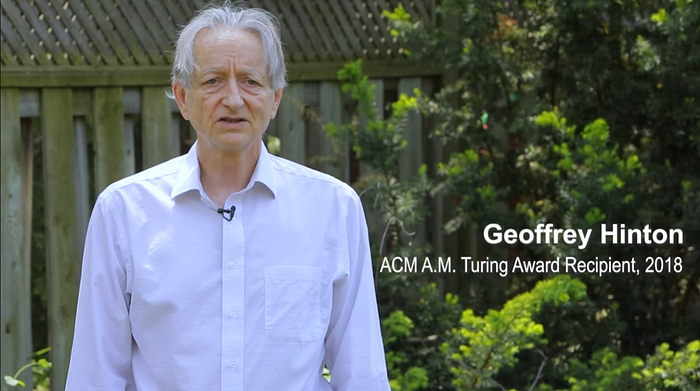

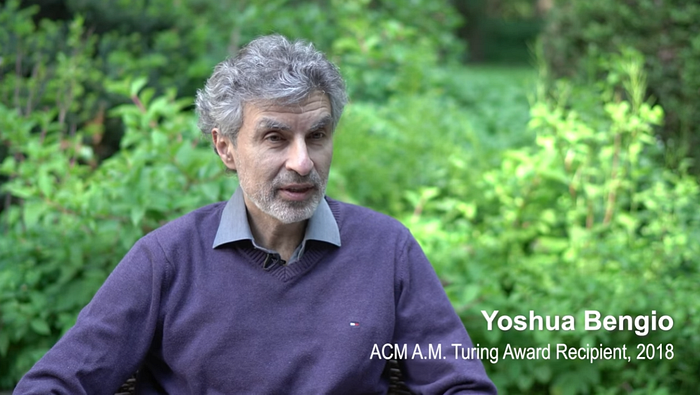

Sharing — Deep Learning for AI, By Yoshua Bengio, Yann LeCun, and Geoffrey Hinton

2018 Turing Award, 2021 Communications of the ACM

The Turing Award is often referred to as the computer science equivalent of the Nobel Prize. Yoshua Bengio, Yann LeCun, and Geoffrey Hinton are recipients of the 2018 ACM A.M. Turing Award for breakthroughs that have made deep neural networks a critical component of computing.

This time, I would like to share a Communications of the ACM Turing Lecture article, “Deep Learning for AI”, authored by Yoshua Bengio, Yann LeCun, and Geoffrey Hinton, which has over 180 citations. (Sik-Ho Tsang @ Medium)

1. The Rise of Deep Learning

The authors begin by discussing the factors behind the rise of deep learning, such as:

- Software platforms such as Caffe, TensorFlow, and PyTorch.

- The invention of very deep networks such as ResNet.

- Unsupervised pretraining (e.g. Denoising Autoencoder).

- The mysterious success of rectified linear units (ReLU).

- The breakthroughs in speech and object recognition (Deep Belief Network).

- The dramatic victory by AlexNet in the 2012 ImageNet competition.

2. Recent Advances

They then highlight several major recent advances:

- Soft attention and the Transformer architecture.

- Unsupervised and self-supervised learning in NLP (e.g. BERT).

- Contrastive learning (e.g. SimCLR) and non-contrastive learning (e.g. SwAV and BYOL) in computer vision.

- Variational auto-encoders (VAE).
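To make the first advance, soft attention, a bit more concrete, here is a minimal pure-Python sketch. It assumes the common dot-product formulation used in Transformers; the function names `softmax` and `soft_attention` are hypothetical and not taken from the article. A query is compared with each key, the scores are turned into weights by a softmax, and the output is the weighted average of the values.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def soft_attention(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Hypothetical helper: blend `values`, weighted by how well each key matches `query`.

    Assumption: scores are dot products scaled by 1/sqrt(d), as in Transformer-style attention.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)  # non-negative weights that sum to 1
    # Weighted sum of the value vectors, computed dimension by dimension.
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]


if __name__ == "__main__":
    q = [1.0, 0.0]
    ks = [[1.0, 0.0], [0.0, 1.0]]
    vs = [[10.0, 0.0], [0.0, 10.0]]
    print(soft_attention(q, ks, vs))
```

In the example, the query matches the first key more closely, so the output leans towards the first value vector without ignoring the second. Because the weights come from a softmax rather than a hard argmax, the whole operation stays differentiable and can be trained by backpropagation.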

3. The Future of Deep Learning

Beyond simply scaling up models, as has been done with language models such as GPT-3, what still needs to be improved?

- Currently, test cases are typically expected to come from the same distribution as the training examples. Unfortunately, this is often not the case in the real world.

- Humans can generalize in a way that is different and more powerful than ordinary iid generalization: we can correctly interpret novel combinations of existing concepts, even if those combinations are extremely unlikely under our training distribution.

Our desire is to achieve greater robustness when confronted with changes in distribution (called out-of-distribution generalization).

- Evidence from neuroscience suggests that groups of nearby neurons (forming what is called a hyper-column) are tightly connected and might represent a kind of higher-level vector-valued unit able to send not just a scalar quantity but rather a set of coordinated values.

- Current deep learning is most successful at perception tasks and generally what are called system 1 tasks. Using deep learning for system 2 tasks that require a deliberate sequence of steps is an exciting area that is still in its infancy.

It seems that our implicit (system 1) processing abilities allow us to guess potentially good or dangerous futures, when planning or reasoning. This raises the question of how system 1 networks could guide search and planning at the higher (system 2) level.

- The article raises many other open questions at the end. Please feel free to read the article directly.

- Finally, there is also a video associated with the article, but the material covered in the video is quite different from that in the article.

This is a very valuable article about how Bengio, LeCun, and Hinton describe and think about AI research, from its past and present to its future. I only briefly mention some of its points here. If you are interested in AI research, you will find the article amazing, just like listening to songs by your favorite singers. I hope this article gains many more citations in the future.

Reference

[2021 CACM] [Deep Learning for AI]

Deep Learning for AI
