Review — The OpenNMT Neural Machine Translation Toolkit: 2020 Edition

OpenNMT Website: https://opennmt.net

The OpenNMT Neural Machine Translation Toolkit: 2020 Edition

OpenNMT: Neural Machine Translation Toolkit

OpenNMT: Open-Source Toolkit for Neural Machine Translation, OpenNMT, by SYSTRAN, Ubiqus, and Harvard SEAS

2020 AMTA, 2018 AMTA, 2017 ACL; over 20, 90, and 1600 citations, respectively (Sik-Ho Tsang @ Medium)

Natural Language Processing, NLP, Neural Machine Translation, NMT

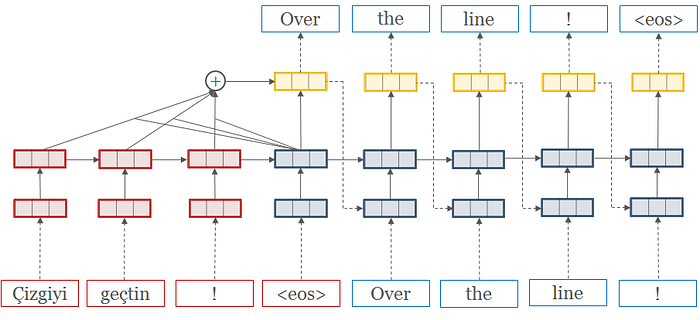

- OpenNMT is a multi-year open-source ecosystem for neural machine translation (NMT) and natural language generation (NLG).

- OpenNMT has been used in several production MT systems.

- This paper introduces the OpenNMT toolkit rather than a new NMT method.

Outline

- OpenNMT

- Experimental Results

1. OpenNMT

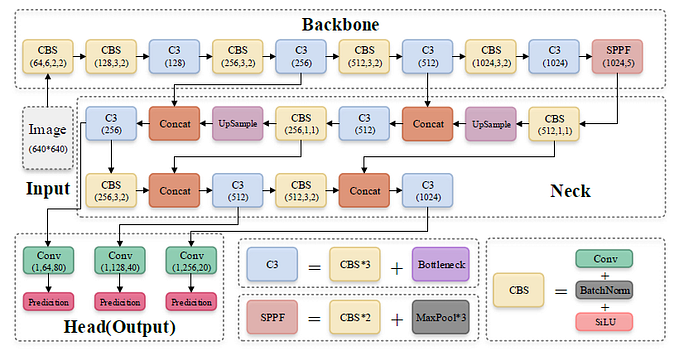

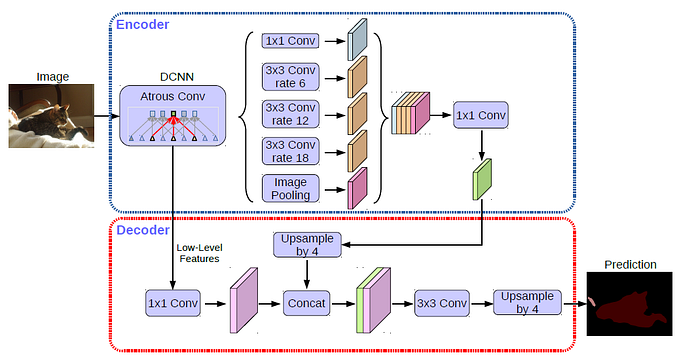

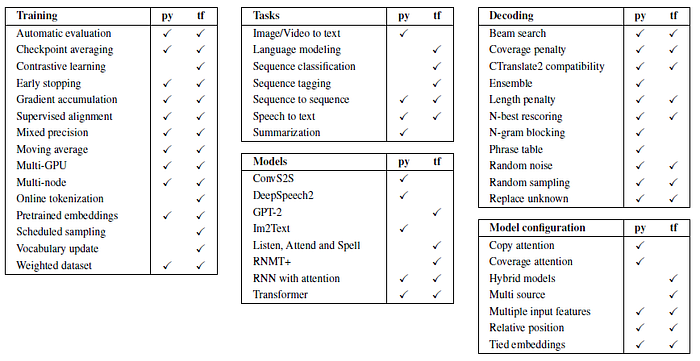

- OpenNMT supports a wide range of model architectures (ConvS2S, GPT-2, Transformer, etc.) and training procedures for neural machine translation, as well as for related tasks such as natural language generation and language modeling.

- OpenNMT-py: A user-friendly and multimodal implementation that benefits from PyTorch's ease of use and versatility.

- OpenNMT-tf: A modular and stable implementation powered by the TensorFlow 2 ecosystem.

- OpenNMT was first released in late 2016 as a Torch7 implementation. The original demonstration paper in 2017 was awarded “Best Demonstration Paper Runner-Up” at ACL 2017.

- After the release of PyTorch, the sunsetting of the Torch7 version was initiated.

- After more than 3 years (2017 to 2020) of active development, OpenNMT projects have been starred on GitHub by over 7,400 users. A community forum is also home to 970 users and more than 9,800 posts about NMT research and how to use OpenNMT effectively.

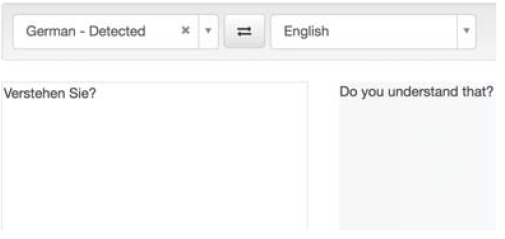

- A live demo has also been developed.

- Research: OpenNMT has been used for other tasks related to neural machine translation, such as summarization, data-to-text, image-to-text, automatic speech recognition, and semantic parsing.

- Production: OpenNMT is also widely used in industry. Companies such as SYSTRAN, Booking.com, and Ubiqus are known to deploy OpenNMT models in production.

- Framework: OpenNMT has also been adopted by organizations such as SwissPost and BNP Paribas, and NVIDIA used OpenNMT as a benchmark for the release of TensorRT 6.

2. Experimental Results

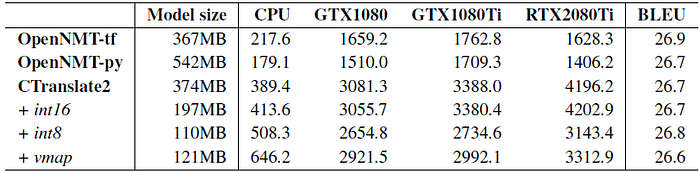

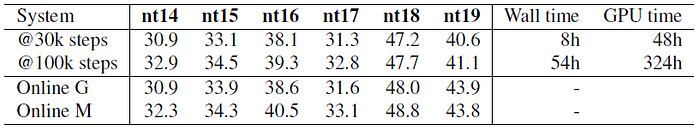

2.1. 2020 AMTA Results

- Dataset: English to German WMT19 task, with the addition of ParaCrawl v5 instead of v3.

- Tokenization: 40,000 BPE merge operations, learned and applied with the OpenNMT Tokenizer.
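To illustrate what a BPE "merge operation" is, here is a minimal pure-Python sketch of BPE learning in the style of Sennrich et al. It is not the OpenNMT Tokenizer's implementation or API. The function names (`learn_bpe`, `get_pair_counts`, `merge_pair`) and the toy word counts are hypothetical. Each iteration merges the most frequent adjacent symbol pair. The setup above would correspond to running 40,000 such iterations over the full training corpus.

```python
from collections import Counter


def get_pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for word, freq in vocab.items():
        symbols = word.split()
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(pair, vocab):
    """Replace every occurrence of the symbol pair with one merged symbol."""
    merged = {}
    for word, freq in vocab.items():
        symbols = word.split()
        out = []
        i = 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = " ".join(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(word_counts, num_merges):
    """Learn up to num_merges merge operations from a word-frequency table."""
    # Each word starts as space-separated characters plus an end-of-word marker.
    vocab = {" ".join(w) + " </w>": c for w, c in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent pair (first one on ties)
        merges.append(best)
        vocab = merge_pair(best, vocab)
    return merges, vocab


merges, vocab = learn_bpe({"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=10)
print(merges[:2])  # [('e', 's'), ('es', 't')]
```

The learned merge list is what gets applied, in order, to segment new text into subwords.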

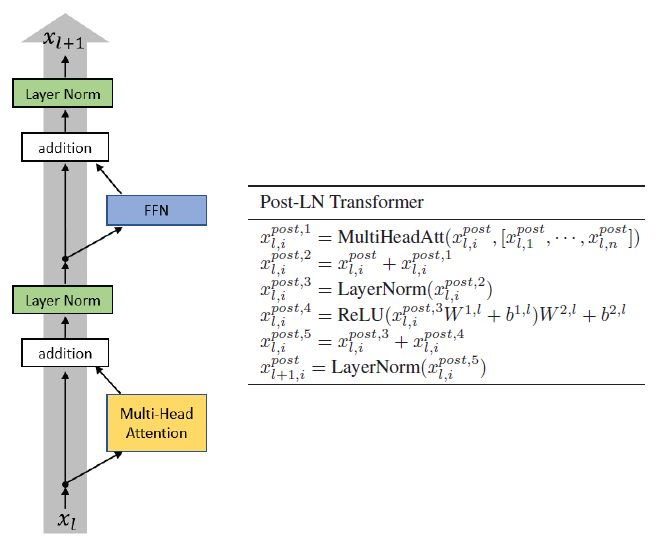

- Model: Transformer Medium (12 attention heads, d_model = 768, d_ff = 3072).

- Training: Trained with OpenNMT-py on 6 RTX 2080 Ti GPUs, using mixed precision. The initial batch size is around 50,000 tokens and the final batch size around 200,000 tokens.

- Inference: The reported scores were obtained with beam search (beam size 5) and an average length penalty.

- During the WMT19 campaign, the best BLEU score for English to German was 44.9, but the best human-evaluated system, an ensemble of Big Transformers, scored only 42.7.

- With OpenNMT tools, this single Transformer Medium setup reaches superior performance.
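The Transformer Medium dimensions above determine the per-head size and the per-layer parameter count. The sketch below works these out under stated assumptions: a standard Transformer layer with biases on all linear projections and LayerNorm gain and bias vectors. The review does not give the number of layers or the vocabulary size, so only per-layer counts are computed, and OpenNMT-py's exact module layout may differ slightly. The class name `TransformerDims` is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransformerDims:
    d_model: int
    heads: int
    d_ff: int

    @property
    def head_dim(self) -> int:
        # Each head attends in a d_model / heads dimensional subspace.
        if self.d_model % self.heads != 0:
            raise ValueError("d_model must be divisible by heads")
        return self.d_model // self.heads

    def attention_params(self) -> int:
        # Q, K, V and output projections: four d_model x d_model matrices plus biases.
        return 4 * (self.d_model * self.d_model + self.d_model)

    def ffn_params(self) -> int:
        # Two linear layers d_model -> d_ff -> d_model, each with a bias.
        return (self.d_model * self.d_ff + self.d_ff) + (self.d_ff * self.d_model + self.d_model)

    def layer_norm_params(self) -> int:
        # One gain vector and one bias vector, each of size d_model.
        return 2 * self.d_model

    def encoder_layer_params(self) -> int:
        # Self-attention + feed-forward + two layer norms.
        return self.attention_params() + self.ffn_params() + 2 * self.layer_norm_params()

    def decoder_layer_params(self) -> int:
        # Self-attention + encoder-decoder attention + feed-forward + three layer norms.
        return 2 * self.attention_params() + self.ffn_params() + 3 * self.layer_norm_params()


medium = TransformerDims(d_model=768, heads=12, d_ff=3072)
print(medium.head_dim)                # 64
print(medium.encoder_layer_params())  # 7087872
print(medium.decoder_layer_params())  # 9451776
```

The feed-forward block (about 4.7M parameters) accounts for roughly two thirds of each encoder layer, which follows from d_ff being 4 × d_model.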

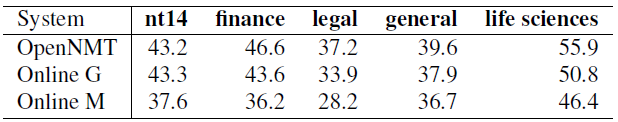
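The inference setting above (beam size 5 with an average length penalty) can be illustrated with a small beam search. Here the "average" length penalty is assumed to mean dividing a hypothesis' cumulative log-probability by its length (counting the end-of-sentence token), so longer outputs are not unfairly penalized for summing more negative terms. This is a simplified sketch, not OpenNMT-py's translator: the toy next-token table stands in for a trained model, and all names (`beam_search`, `avg_length_penalty`, `toy_model`) are hypothetical.

```python
import math
from typing import Callable, Dict, List, Tuple

EOS = "</s>"
Hypothesis = Tuple[Tuple[str, ...], float]  # (tokens, cumulative log-probability)


def avg_length_penalty(log_prob: float, length: int) -> float:
    """'Average' normalization: mean log-probability per generated token."""
    return log_prob / max(length, 1)


def beam_search(
    next_token_logprobs: Callable[[Tuple[str, ...]], Dict[str, float]],
    beam_size: int = 5,
    max_len: int = 10,
    length_penalty: Callable[[float, int], float] = avg_length_penalty,
) -> Hypothesis:
    beams: List[Hypothesis] = [((), 0.0)]
    finished: List[Hypothesis] = []
    for _ in range(max_len):
        # Expand every live hypothesis with every possible next token.
        candidates: List[Hypothesis] = [
            (prefix + (tok,), score + lp)
            for prefix, score in beams
            for tok, lp in next_token_logprobs(prefix).items()
        ]
        # Prune to the beam_size best candidates by raw cumulative log-probability.
        candidates.sort(key=lambda h: h[1], reverse=True)
        beams = []
        for hyp in candidates[:beam_size]:
            if hyp[0][-1] == EOS:
                finished.append(hyp)  # completed hypothesis leaves the beam
            else:
                beams.append(hyp)
        if not beams:
            break
    pool = finished or beams
    # Pick the final output with the length penalty applied.
    return max(pool, key=lambda h: length_penalty(h[1], len(h[0])))


# Toy "model": next-token probabilities keyed by prefix (hypothetical values).
TOY_TABLE = {
    (): {"the": 0.9, EOS: 0.1},
    ("the",): {"cat": 0.5, EOS: 0.5},
    ("the", "cat"): {EOS: 0.9, "sat": 0.1},
}


def toy_model(prefix: Tuple[str, ...]) -> Dict[str, float]:
    probs = TOY_TABLE.get(prefix, {EOS: 1.0})
    return {tok: math.log(p) for tok, p in probs.items()}


print(beam_search(toy_model)[0])                                  # ('the', 'cat', '</s>')
print(beam_search(toy_model, length_penalty=lambda lp, n: lp)[0])  # ('the', '</s>')
```

Without normalization the shorter "the </s>" wins on raw log-probability (about -0.80 vs -0.90), while the average penalty prefers "the cat </s>" (about -0.30 vs -0.40 per token).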

2.2. 2018 AMTA Results

References

[2020 AMTA] [OpenNMT]

The OpenNMT Neural Machine Translation Toolkit: 2020 Edition

[2018 AMTA] [OpenNMT]

OpenNMT: Neural Machine Translation Toolkit

[2017 ACL] [OpenNMT]

OpenNMT: Open-Source Toolkit for Neural Machine Translation

[Official Website] https://opennmt.net/

Machine Translation

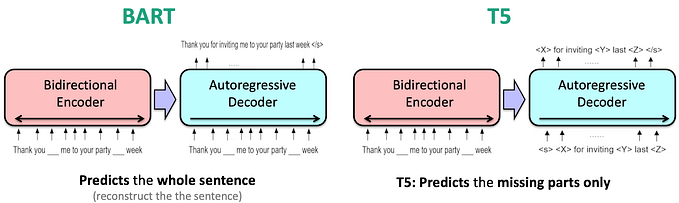

2014 [Seq2Seq] [RNN Encoder-Decoder] 2015 [Attention Decoder/RNNSearch] 2016 [GNMT] [ByteNet] [Deep-ED & Deep-Att] 2017 [ConvS2S] [Transformer] [MoE] [GMNMT] [CoVe] 2018 [Shaw NAACL’18] 2019 [AdaNorm] [GPT-2] [Pre-Norm Transformer] [FAIRSEQ] 2020 [Batch Augment, BA] [GPT-3] [T5] [Pre-LN Transformer] [OpenNMT] 2021 [ResMLP] [GPKD]